HTTP/3 – Why should I care?

My talk from EuroPython 2020.

Abstract

HTTP is the foundation of the current web, and HTTP/3 is its upcoming major version. The new version is built on top of the QUIC transport protocol, originally developed at Google.

HTTP/3 can change the Internet as we know it today. Since its beginning in the '90s, HTTP has transferred data over TCP to ensure reliable connections between clients and servers. QUIC is a TCP alternative, reimplemented on top of unreliable and connectionless UDP.

The proprietary Google implementation of QUIC is deployed worldwide and supported by Chrome browsers. HTTP/3 will hopefully be standardized by the IETF soon, but many diverse implementations are already available today.

HTTP/3 improves performance and increases privacy. The switch from TCP to QUIC allows us to address the inherent limitations of previous HTTP versions. The QUIC protocol is completely encrypted, including traffic control headers, which are visible in TCP.

This talk introduces HTTP/3 and the underlying QUIC protocol. It shows both advantages and disadvantages of the new technology, describes the landscape of the current implementations, and suggests what you can try today.

Video

Transcript

Here is a transcript of my talk.

A PDF version of my slides is available too, but the slide deck is meant for illustration, so it may not be useful on its own.

Hi everybody, welcome!

I hope that you are curious about HTTP/3, and I hope you enjoy this talk.

This is an exceptional opportunity to speak at EuroPython.

My name is Miloslav Pojman and I am streaming from Prague.

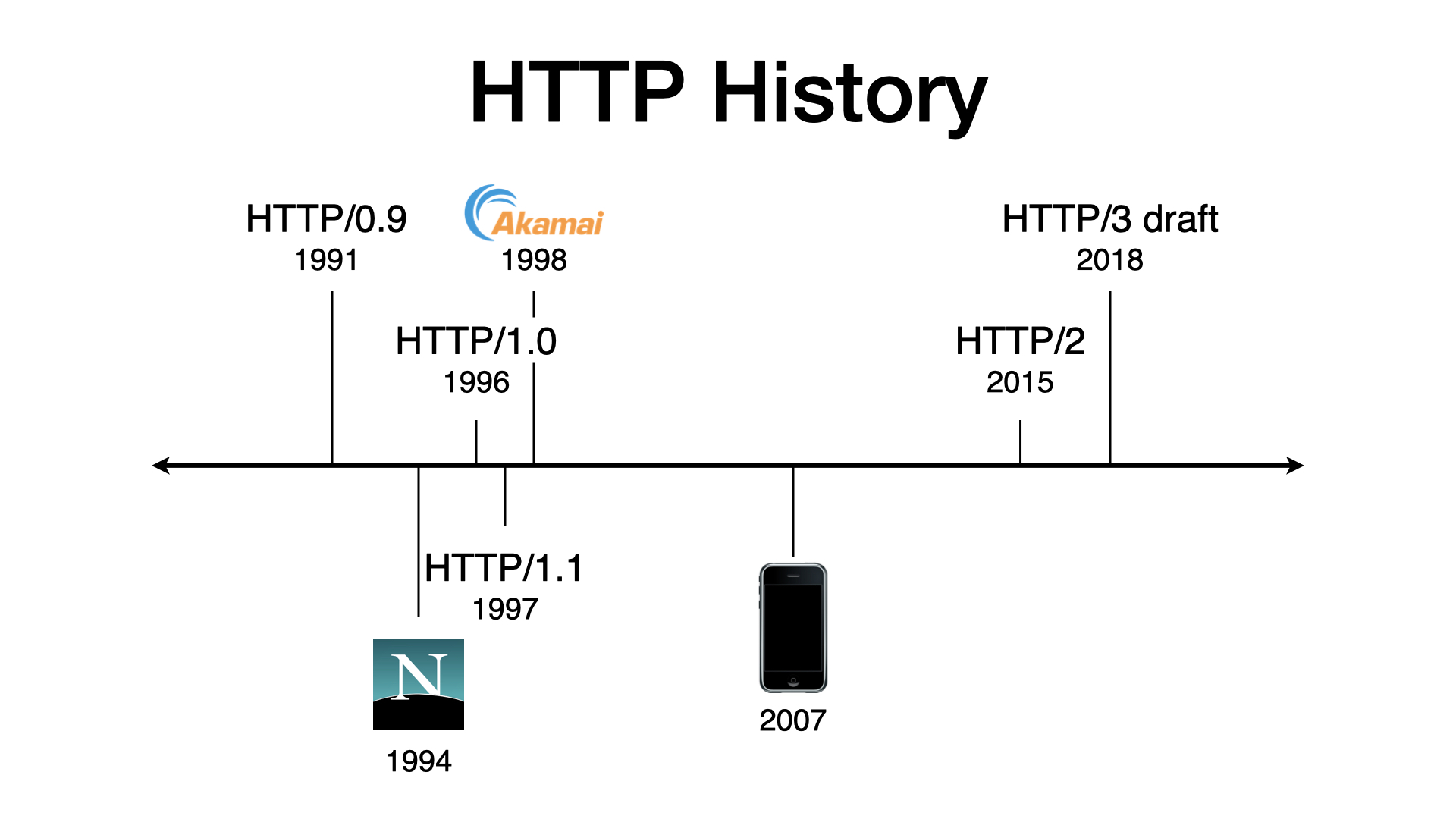

I work for Akamai Technologies on a protocol optimization team.

Akamai runs one of the largest CDNs in the world, with more than a quarter of a million servers around the globe. Our peak traffic is more than 150 Tbps.

I am speaking here about HTTP/3 because our protocol optimization team enabled QUIC for the whole Akamai network. If you are wondering how QUIC is related to HTTP/3 then you are watching the right stream.

In the next 40 minutes (approximately), I will explain what HTTP/3 and QUIC are.

HTTP

I assume that you have at least a rough idea of what happens under the hood when you visit your favorite internet site.

But don’t be afraid if you are not an expert in network protocols. This is an introductory talk and I will start it with a quick recap to make everything clear.

When you visit a website, your browser issues HTTP requests. HTTP is a simple text protocol. You can speak HTTP yourself, without a web browser.

You open a TCP connection to a server (for example using netcat or telnet), write your request, hit the return key twice, and the server should send a response back. That’s all. It’s that simple.
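To make this concrete, here is a minimal Python sketch of the same exchange with a plain TCP socket instead of netcat. It assumes a server that still answers plain HTTP on port 80, and it adds a `Connection: close` header so that the server closes the connection after the response and the client can simply read until end of file.

```python
import socket


def build_request(host: str, path: str = "/") -> bytes:
    """Build a minimal HTTP/1.1 GET request.

    Each line ends with CRLF; the empty line at the end (the second
    "return") tells the server that the request is complete.
    """
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",
        "Connection: close",  # ask the server to close after responding
        "",
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def fetch(host: str, port: int = 80, path: str = "/") -> bytes:
    """Send the request over a plain TCP connection and read until EOF."""
    with socket.create_connection((host, port), timeout=10) as sock:
        sock.sendall(build_request(host, path))
        chunks = []
        while chunk := sock.recv(4096):
            chunks.append(chunk)
    return b"".join(chunks)
```

Calling `fetch("example.com")` (any host that serves plain HTTP would do) should return the raw response bytes: the status line, the headers, an empty line, and the body.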

I’m sure that most of you know this.

This is HTTP/1.1 from 1997 and it still works well. Most websites today still use this version from the '90s.

The difference from the '90s is that most traffic today is encrypted using HTTPS. HTTPS means HTTP over TLS. An HTTPS connection is encrypted using TLS.

That’s the difference. But besides that nothing changes. Once I open an encrypted connection (for example using OpenSSL), I can write my HTTP request just as I did before.
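The HTTPS variant differs only in the extra TLS layer around the socket. Here is a minimal sketch with Python's standard `ssl` module, under the same assumptions as a plain request (a reachable server, here on port 443, and `Connection: close` so the client can read until end of file). The request bytes are exactly the same as without TLS.

```python
import socket
import ssl


def fetch_https(host: str, path: str = "/", port: int = 443) -> bytes:
    """Send a plain-text HTTP/1.1 request, but inside a TLS session."""
    request = (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Connection: close\r\n"
        "\r\n"
    ).encode("ascii")
    # Verifies the server certificate against the system trust store.
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as raw_sock:
        # server_hostname enables SNI and hostname verification.
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            tls_sock.sendall(request)
            chunks = []
            while chunk := tls_sock.recv(4096):
                chunks.append(chunk)
    return b"".join(chunks)
```

Everything above the TLS layer is unchanged: TLS encrypts the bytes on the way out and decrypts them on the way in.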

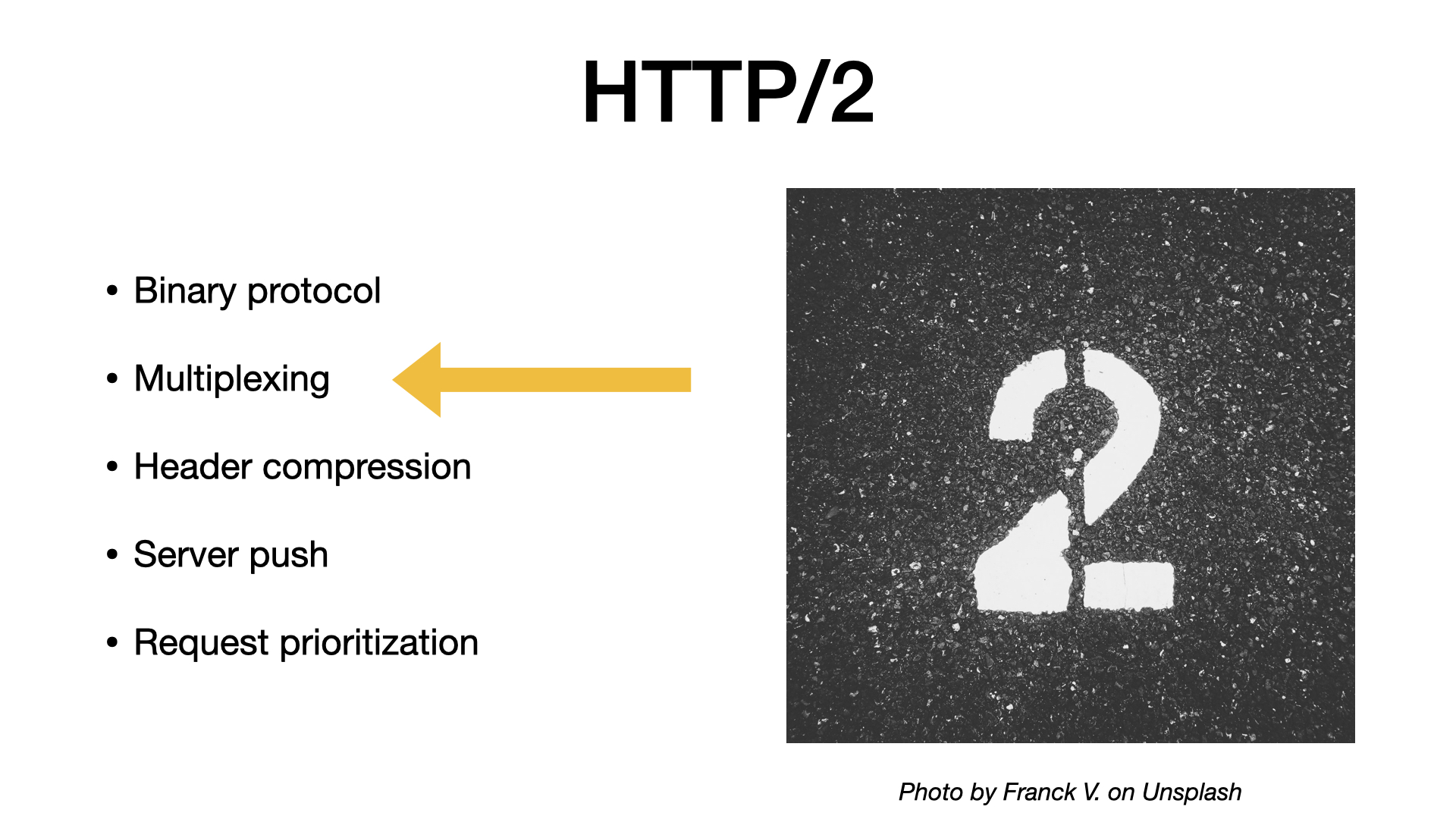

If you want something more sophisticated, we should move to 2015 when HTTP/2 was published. Unlike the first version, HTTP/2 is a binary protocol, so I’m sorry, I won’t show you an example here.

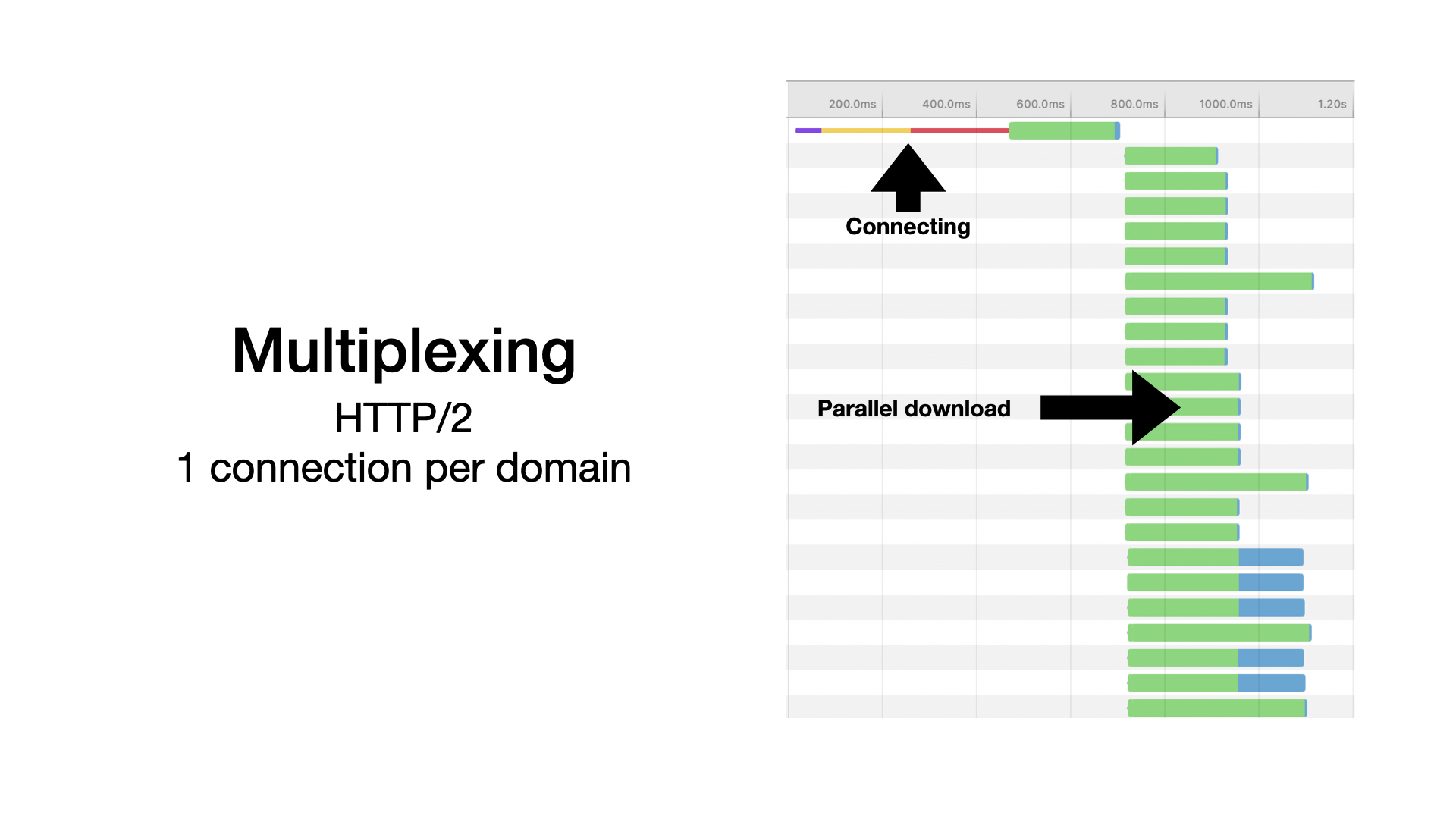

HTTP/2 has many nice properties. The most important one is that it supports multiplexing – you can download many objects in parallel over a single connection.
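Even though HTTP/2 cannot be typed by hand, its multiplexing idea is small enough to sketch. Every HTTP/2 frame starts with a 9-byte header (24-bit length, 8-bit type, 8-bit flags, and a 31-bit stream identifier, as defined in RFC 7540), so frames of different requests can be interleaved on one connection and sorted out again by stream ID. The sketch below only encodes and decodes frame headers; it is not a usable HTTP/2 implementation (no HPACK header compression, no settings, no flow control).

```python
from __future__ import annotations

import struct

# Frame type and flag values from RFC 7540, sections 6.1 and 6.2.
DATA = 0x0
HEADERS = 0x1
END_STREAM = 0x1


def frame_header(length: int, frame_type: int, flags: int, stream_id: int) -> bytes:
    """Encode the 9-byte HTTP/2 frame header (RFC 7540, section 4.1)."""
    if not 0 <= length < 2**24:
        raise ValueError("length must fit in 24 bits")
    if not 0 <= stream_id < 2**31:
        raise ValueError("stream id must fit in 31 bits")
    # The 24-bit length is packed as 8 high bits + 16 low bits;
    # the reserved bit in front of the stream id stays 0.
    return struct.pack(">BHBBI", length >> 16, length & 0xFFFF,
                       frame_type, flags, stream_id)


def parse_frame_header(header: bytes) -> tuple[int, int, int, int]:
    """Decode a frame header into (length, type, flags, stream id)."""
    high, low, frame_type, flags, stream_id = struct.unpack(">BHBBI", header)
    return (high << 16) | low, frame_type, flags, stream_id & 0x7FFFFFFF


def demultiplex(wire: bytes) -> dict[int, bytes]:
    """Reassemble each stream's payload from interleaved frames."""
    streams: dict[int, bytes] = {}
    offset = 0
    while offset < len(wire):
        length, _type, _flags, stream_id = parse_frame_header(wire[offset:offset + 9])
        offset += 9
        streams[stream_id] = streams.get(stream_id, b"") + wire[offset:offset + length]
        offset += length
    return streams
```

If a CSS file on stream 1 and a script on stream 3 are sent as alternating DATA frames, `demultiplex` puts each payload back together, because every frame names the stream it belongs to.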

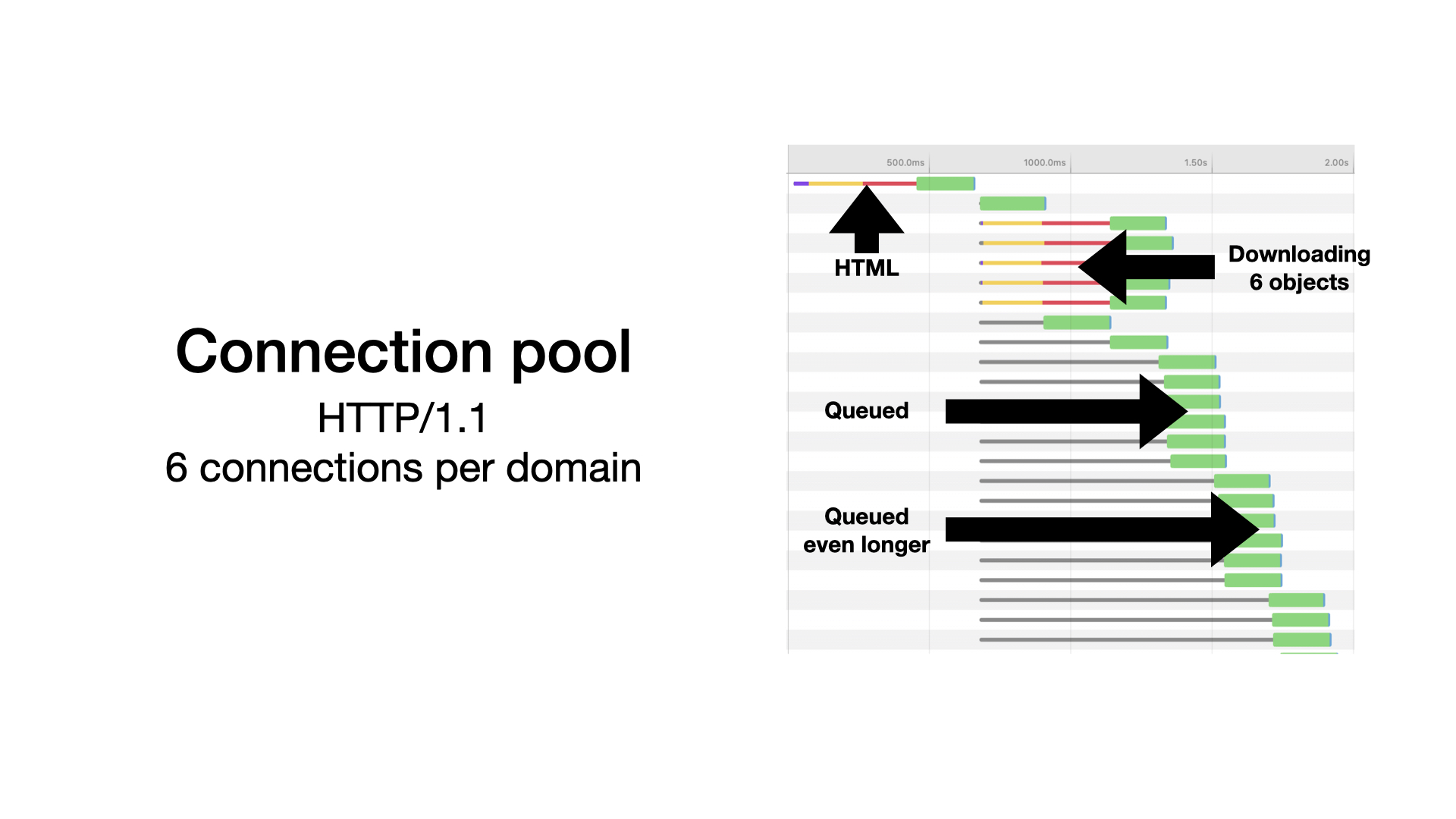

To see why multiplexing is useful, let’s look at how a web page is loaded using HTTP/1.

With HTTP/1, to allow at least some parallelism, browsers open multiple connections. Typically, browsers open six connections per domain.

But sites today usually consist of many more than six objects. A few hundred requests are not an exception: images, CSS styles, JavaScript, Ajax requests, fonts, analytics, ads, …

Look at the diagram at the slide:

- First, an HTML page is loaded. That’s one request.

- Then five more connections are opened and 6 objects are downloaded in parallel. The yellow and red lines measure the time necessary for connection setup.

- The next objects are queued until the previous requests finish.

- And so on. Further requests are queued for even longer.

In the diagram, the green bars are waiting times, that is, latency. A browser sends a request and waits for a response. At the end of each line, narrow blue bars measure the time spent downloading, transferring data. You can see in our example that for small objects the download times can be negligible compared to the waiting, compared to the latency.

The described issue is called HTTP head-of-line blocking. Requests are blocked until one of the six connections is available.

To minimize the consequences of head-of-line blocking, we invented JavaScript bundles and image sprites.

The idea is simple. If you download fewer objects, you issue fewer requests and you spend less time waiting. But you can imagine that downloading everything in one blob may not be the optimal solution. You are likely to be downloading much more than you need.

With HTTP/2, browsers open only one connection per domain.

Then, they use it to download all objects concurrently.

The blue download times can be longer (because the connection is shared), but avoiding the unnecessary waiting is a game-changer.
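The effect of the six-connection limit can be put into a back-of-the-envelope model. This is a toy sketch with assumed parameters, not a measurement: it ignores connection setup, TCP slow start, and the initial HTML request, and it assumes that every object takes `transfer` seconds at full link bandwidth. HTTP/1 pays one round trip per wave of six requests, while HTTP/2 pays a single round trip for everything. The total transfer time is the same in both cases because the bandwidth is shared either way.

```python
import math


def http1_load_time(objects: int, rtt: float, transfer: float,
                    connections: int = 6) -> float:
    """Toy model: requests go out in waves, one per open connection.

    Each wave costs one round trip of waiting; the transfer time of all
    objects adds up because the connections share the link bandwidth.
    """
    waves = math.ceil(objects / connections)
    return waves * rtt + objects * transfer


def http2_load_time(objects: int, rtt: float, transfer: float) -> float:
    """Toy model: all requests go out at once on one multiplexed connection."""
    return rtt + objects * transfer
```

With 100 objects, a 100 ms round trip, and 2 ms of transfer per object, the model gives about 1.9 s for HTTP/1 (17 waves) and 0.3 s for HTTP/2. The gap comes entirely from the waiting, which is why latency, not bandwidth, is the limit for HTTP/1.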

Today, most top sites use HTTP/2. When performance matters, HTTP/2 should almost always be enabled. Obviously, you should always measure what works best for your use case.

With HTTP/1, you can be limited by the latency, by the distance to the server. With HTTP/2, this limitation is suppressed, allowing you to utilize most of the available bandwidth.

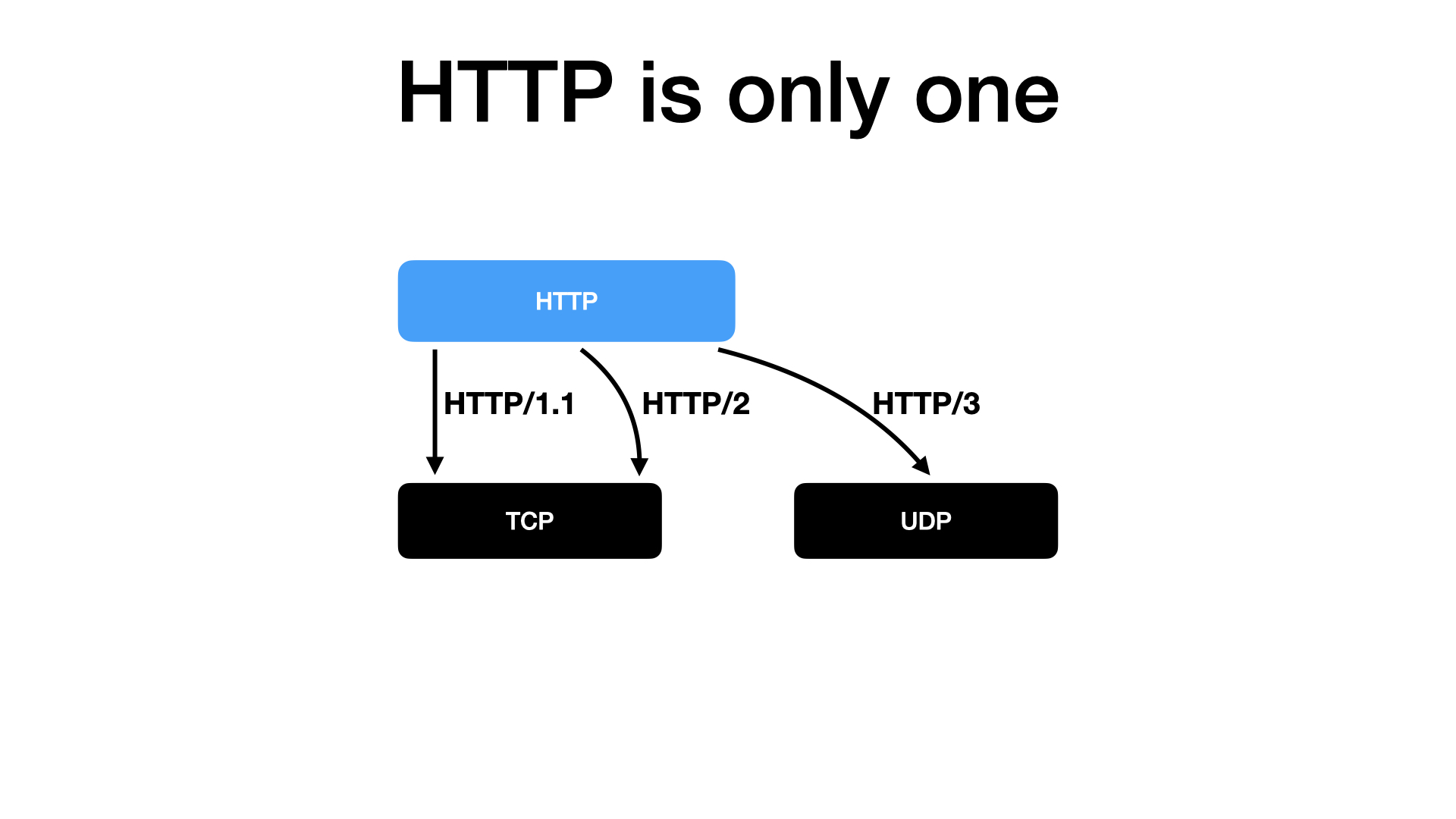

So, we have two important HTTP versions.

We have HTTP/1, which is more than 20 years old, and is still good enough for most sites. And we have HTTP/2, which was standardized only 5 years ago, and which improves performance a lot.

In this situation, it is fair to ask: Why do we need a new protocol today? What can HTTP/3 offer us that is new?

The answer is that HTTP/3 is completely different.

Transport protocols

It replaces the foundations of the internet.

That’s a brave statement. What are the internet foundations? How does the Internet work?

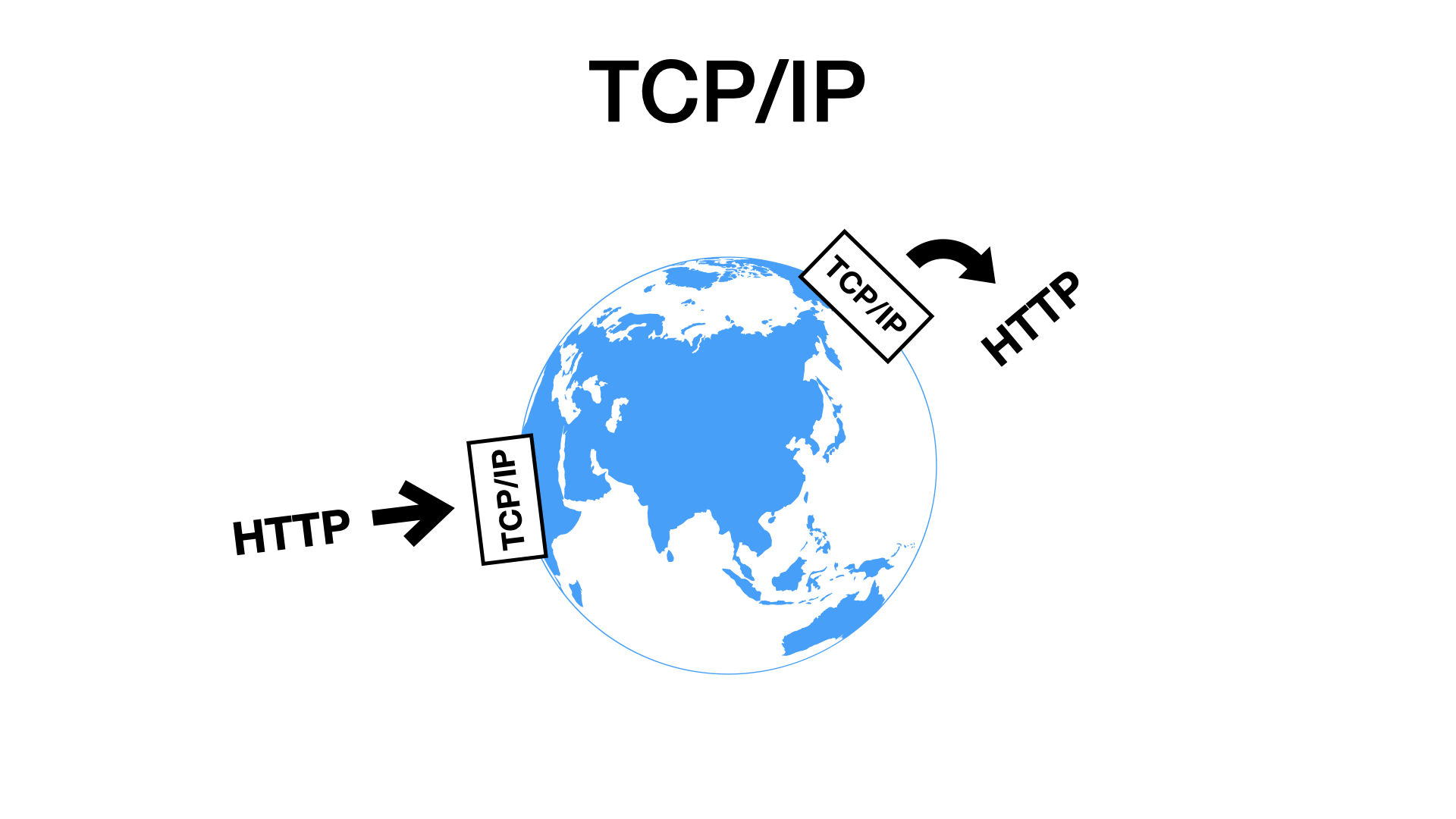

The internet is a complicated beast. But the good news is that we mostly don’t care because all the complexity is hidden from us by something called TCP.

TCP is like a magic box. You write a message to the box, and on the other side, somebody can read it from their box.

If something gets lost on the way, TCP retransmits it. If something is delayed, TCP reorders it. The TCP layer handles possible network glitches. You get a reliable byte stream and don’t have to care how it is implemented.

You can write anything to a TCP socket. In this talk, we care about HTTP. But TCP can transport anything. For example FTP. Or emails using POP3 or SMTP. Or anything else.

The TCP protocol is older than HTTP. It has been here since the '80s, with early implementations from the '70s, and we have used it ever since.

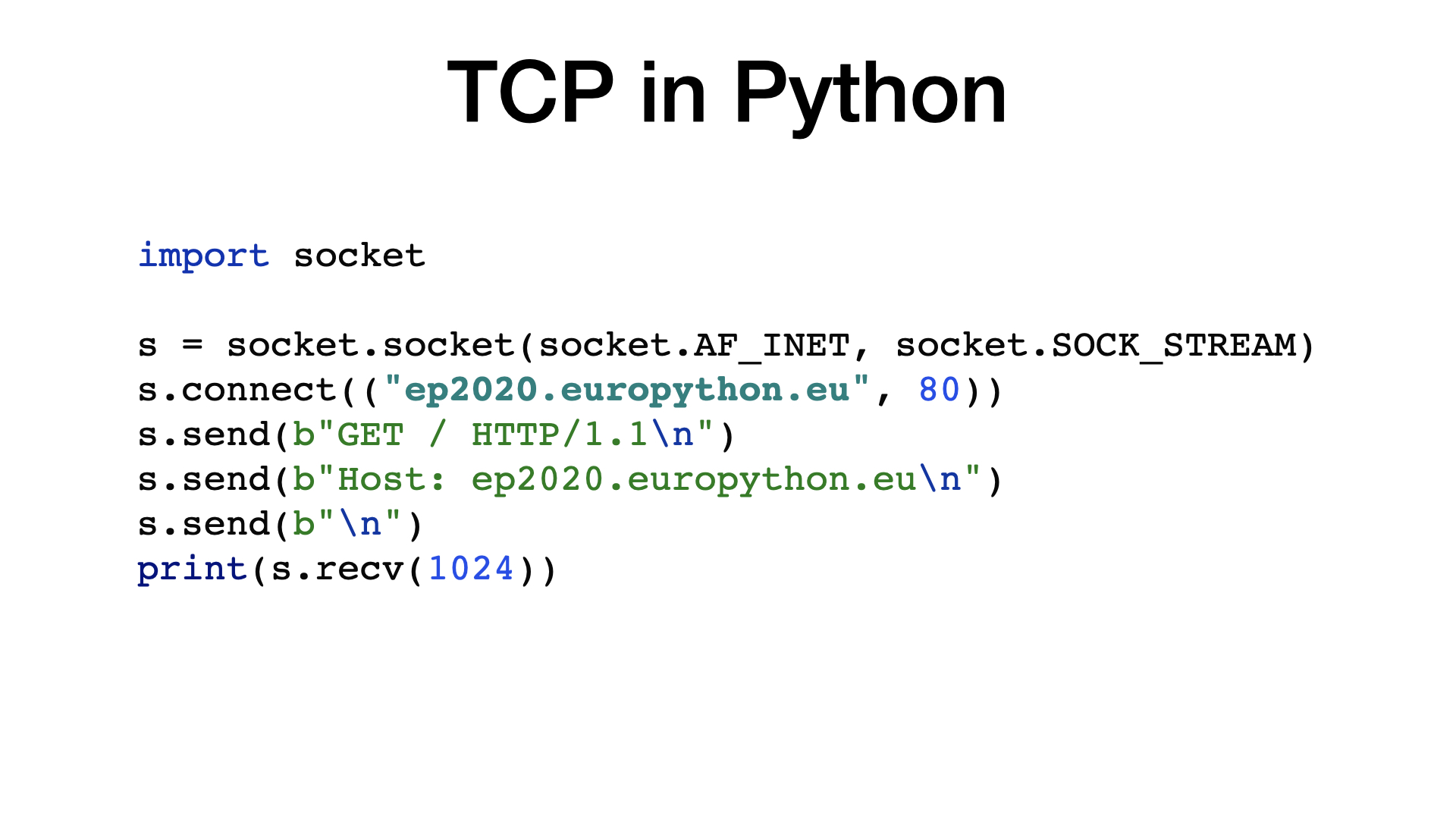

TCP is implemented in your operating system. Thanks to that, you can open a TCP connection using almost any programming language, including Python, with a few lines of code only.
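To illustrate how little code a TCP connection needs, here is a self-contained sketch that runs both ends on the loopback interface: a small echo server in a background thread and a client that writes a message and reads it back. The helper names are illustrative only.

```python
import socket
import threading


def echo_once(server: socket.socket) -> None:
    """Accept one client and echo bytes back until it closes its side."""
    conn, _addr = server.accept()
    with conn:
        while data := conn.recv(1024):
            conn.sendall(data)


def tcp_roundtrip(message: bytes) -> bytes:
    # Listen on an ephemeral port on the loopback interface.
    with socket.create_server(("127.0.0.1", 0)) as server:
        port = server.getsockname()[1]
        worker = threading.Thread(target=echo_once, args=(server,))
        worker.start()
        # The client side: a connected, reliable, ordered byte stream.
        with socket.create_connection(("127.0.0.1", port)) as client:
            client.sendall(message)
            client.shutdown(socket.SHUT_WR)  # signal "no more data"
            received = b""
            while chunk := client.recv(1024):
                received += chunk
        worker.join()
    return received
```

The client calls `shutdown(socket.SHUT_WR)` to tell the server that it has finished writing; the server then sees end of file and closes its side. For large messages, real code would read and write concurrently so that neither side blocks on full buffers.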

If you are used to high-level APIs then the TCP sockets may look complicated, old-fashioned. But when you consider that this abstraction gets your request over the internet, and how old the API is … it’s pretty amazing.

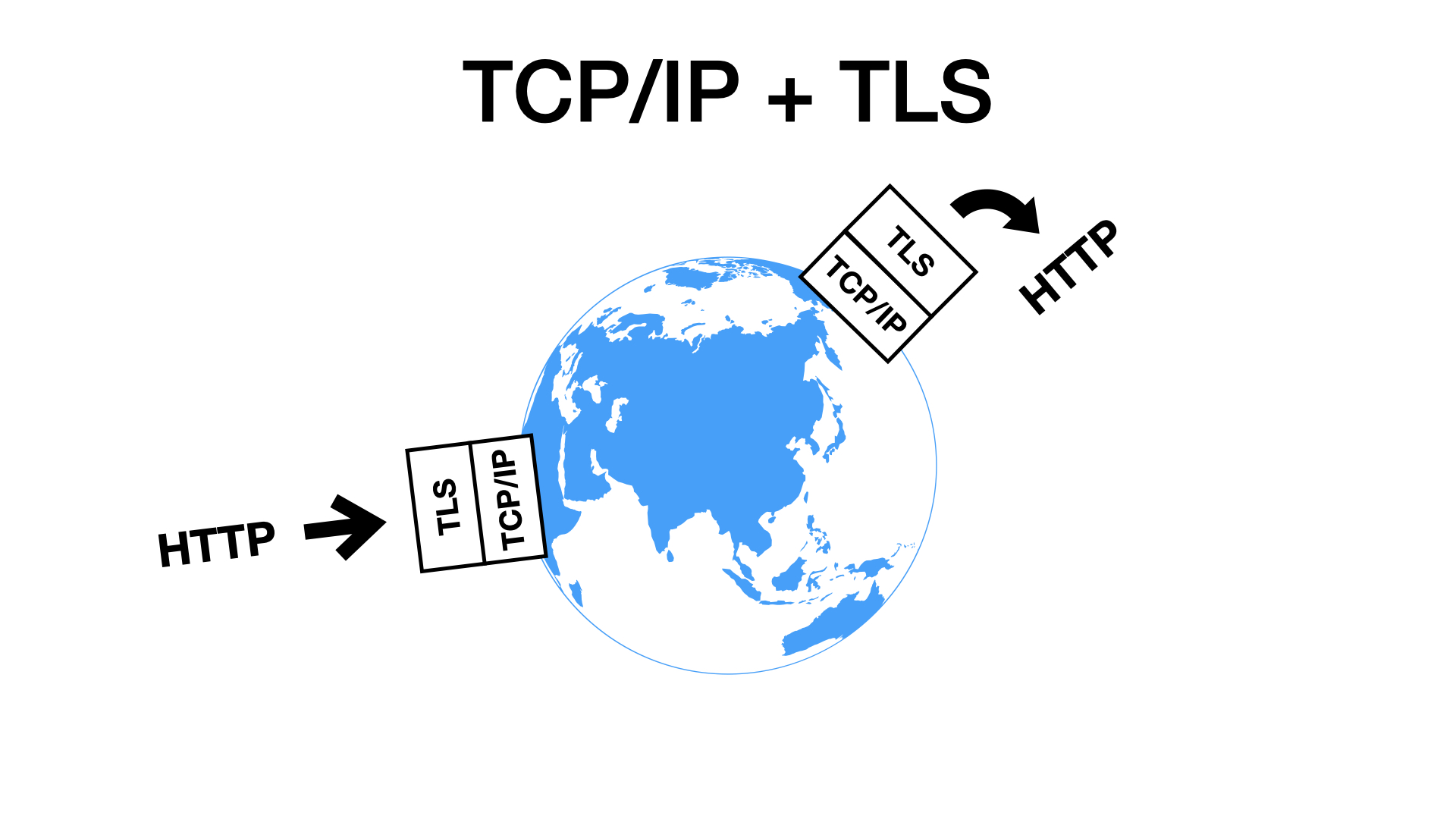

We all already know that HTTPS is HTTP over TLS. TLS is just a second box that sits on top of TCP.

It encrypts everything that goes in and decrypts everything that goes out.

TLS prevents eavesdropping and data tampering. That means that nobody can read or change your payload.

Similar to TCP, TLS is also protocol independent. It can transfer HTTP or other protocols. For example, FTPS is FTP over TLS over TCP.

What would be our options, if there was no TCP?

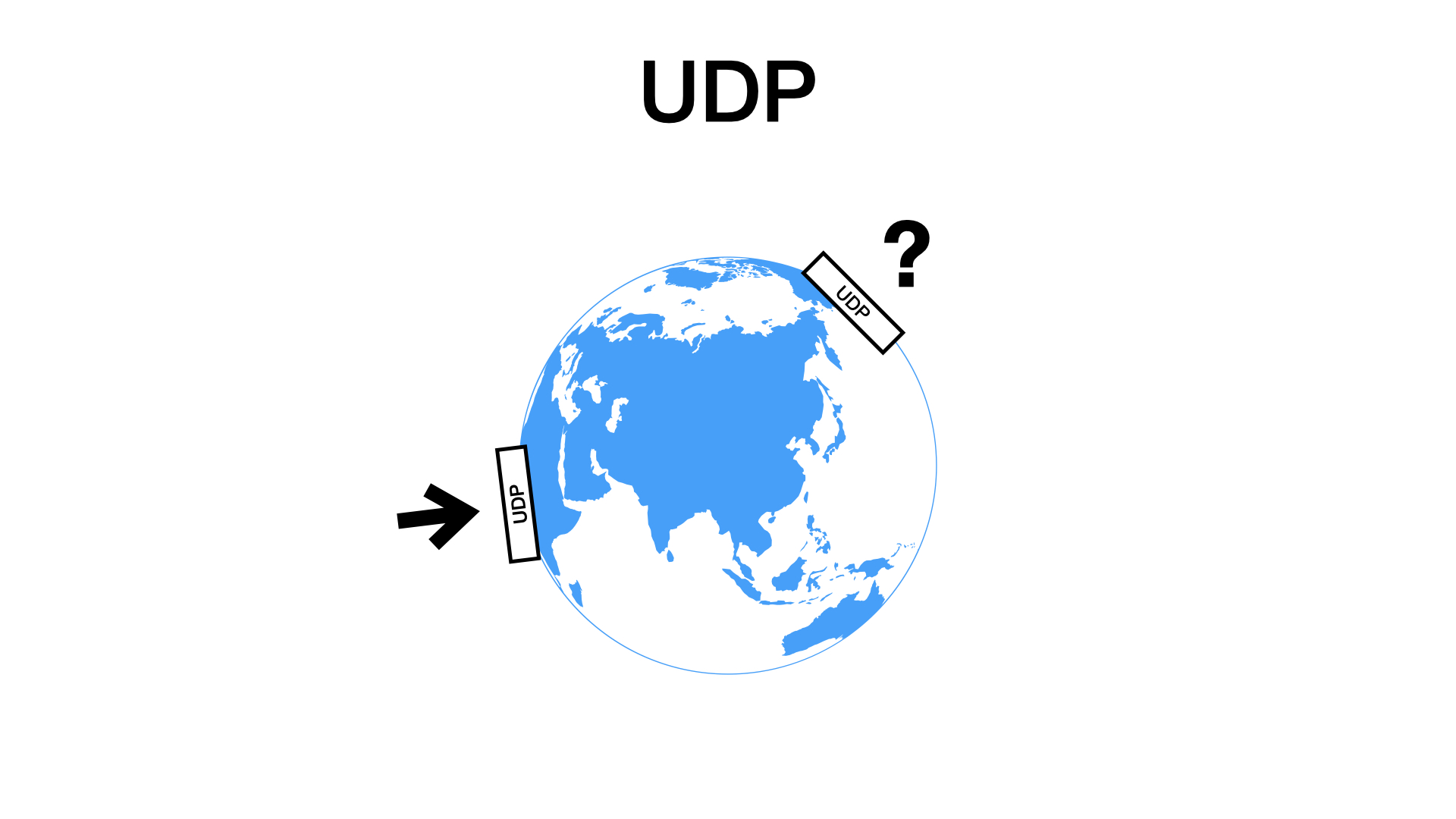

TCP has a younger and less clever brother called UDP. UDP is primitive compared to TCP.

With UDP, you send packets and they may or may not arrive. And if they arrive, they can appear in any order. It means that your app has to handle any network glitch.
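For comparison, here is a minimal UDP sketch on the loopback interface. There is no connection and no handshake: each `sendto()` call produces an independent datagram, message boundaries are preserved, and nothing is retransmitted. The one-second timeout is an arbitrary choice for this example. On loopback the datagrams will normally all arrive, but over a real network the receiver has to cope with loss and reordering itself.

```python
from __future__ import annotations

import socket


def udp_exchange(messages: list[bytes]) -> list[bytes]:
    """Send datagrams over loopback and collect whatever arrives."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    with receiver, sender:
        receiver.bind(("127.0.0.1", 0))
        receiver.settimeout(1.0)
        address = receiver.getsockname()
        # No handshake: each sendto() is an independent datagram.
        for message in messages:
            sender.sendto(message, address)
        received = []
        for _ in messages:
            try:
                data, _peer = receiver.recvfrom(65535)
            except socket.timeout:
                break  # a datagram was lost; UDP will not resend it
            received.append(data)
    return received
```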

Typical UDP use cases include DNS, real-time streaming, or online gaming.

If we had no TCP, we would have to use UDP. There are other transport protocols besides TCP and UDP, but it would be very difficult to use them on the Internet, because they are rarely supported.

If we had to use UDP and we wanted something reliable then we could build something like TCP on top of UDP. You know, develop some abstraction that can be reused.
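The simplest form of "TCP on top of UDP" is a stop-and-wait scheme: number every chunk, wait for an acknowledgement, and retransmit on timeout. The sketch below simulates it with a hypothetical `lossy_channel` function instead of real sockets, and with a seeded random generator so that runs are repeatable. Real protocols such as TCP and QUIC keep many packets in flight at once instead of waiting after each one, but the principle of sequence numbers plus retransmission is the same.

```python
from __future__ import annotations

import random


def lossy_channel(packet, loss_rate: float, rng: random.Random):
    """Hypothetical stand-in for UDP: returns the packet, or None if 'lost'."""
    return None if rng.random() < loss_rate else packet


def reliable_send(chunks: list[bytes], loss_rate: float = 0.3,
                  seed: int = 1, max_tries: int = 50) -> tuple[bytes, int]:
    """Stop-and-wait ARQ: number each chunk, resend until it is acknowledged.

    Returns the bytes the receiver reassembled and the number of packets sent.
    """
    rng = random.Random(seed)
    delivered: dict[int, bytes] = {}
    sent = 0
    for seq, chunk in enumerate(chunks):
        for _ in range(max_tries):
            sent += 1
            packet = lossy_channel((seq, chunk), loss_rate, rng)
            if packet is not None:
                # The receiver stores the chunk (a duplicate just overwrites it)...
                delivered[packet[0]] = packet[1]
                # ...and sends an ACK, which can be lost as well.
                if lossy_channel(("ACK", seq), loss_rate, rng) is not None:
                    break
            # No ACK before the (simulated) timeout: retransmit.
        else:
            raise TimeoutError(f"chunk {seq} was never acknowledged")
    data = b"".join(delivered[i] for i in range(len(chunks)))
    return data, sent
```

The sequence numbers are what make duplicates harmless: when an ACK is lost, the sender retransmits, and the receiver simply stores the same chunk again.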

Hmm, maybe, that may not be the worst idea. Maybe, the thing that we will build on top of UDP could be better than the original TCP.

Say hello to QUIC.

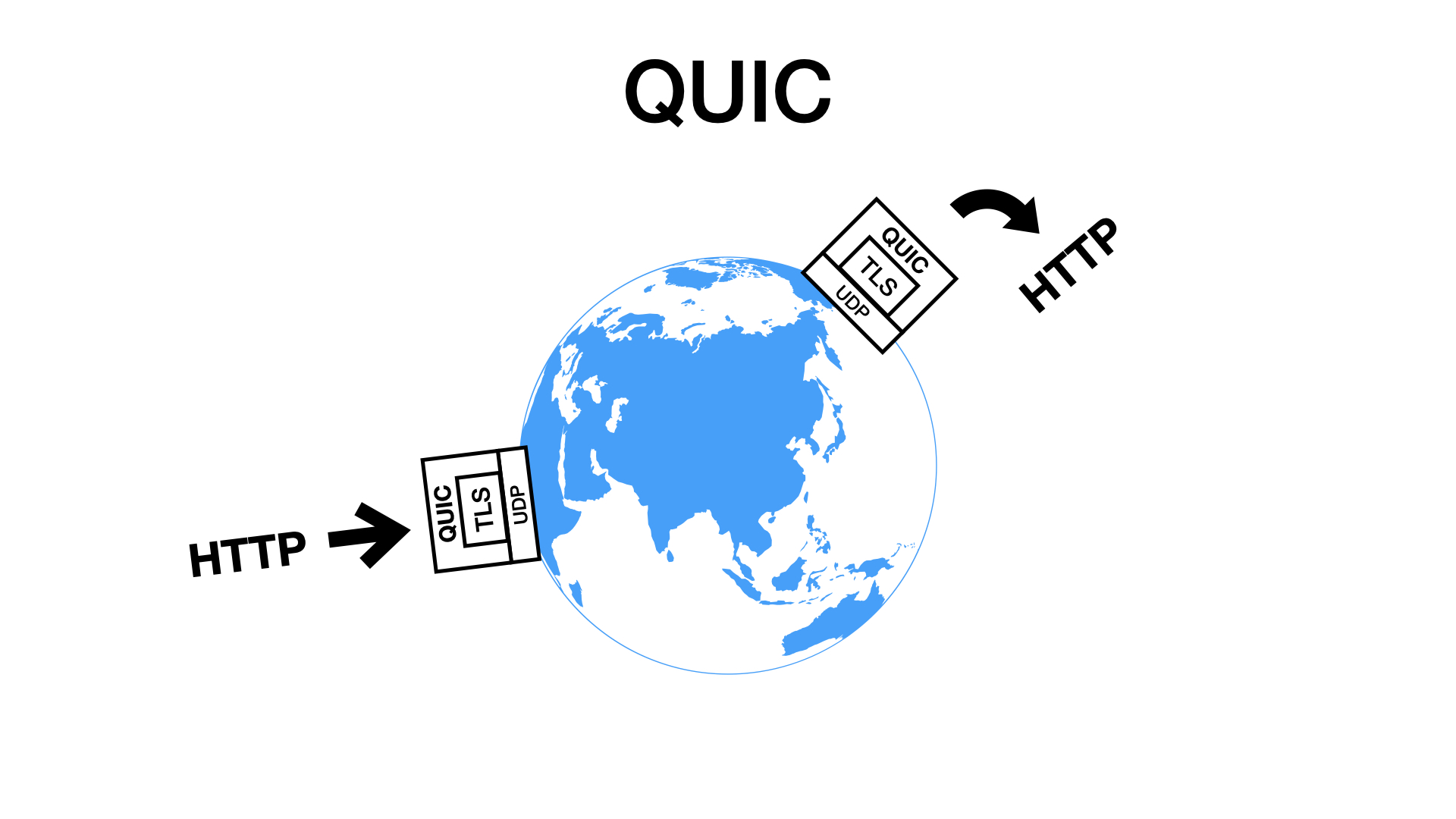

QUIC is a new transport protocol designed at Google. It emulates and improves TCP, on top of UDP.

QUIC is TCP redesigned and rewritten from scratch.

QUIC implements in user space everything that is normally provided by the operating system kernel.

Similar to TCP, QUIC retransmits and reorders packets, so you get reliable byte streams.

QUIC has TLS built-in. Everything delivered using QUIC is encrypted by default.

QUIC can be used to deliver any message. At least in theory, or in the future. In practice, today, QUIC is used exclusively with HTTP.

HTTP/3

That gets us to the third HTTP version.

HTTP/3 is HTTP over QUIC. HTTP version 3 is similar to HTTP version 2, but it is delivered using QUIC (using UDP) instead of TCP.

That’s what makes HTTP/3 so interesting. Under the hood, it uses a completely different transport protocol than the one used since the '90s.

But one does not rewrite TCP just for fun. The new transport layer should give us some advantages. So, what are they?

The main advantage of QUIC is that it is multiplexed. It supports many independent logical flows within a single connection.

Wait! Did you listen to me? Didn’t I tell you that multiplexing is the main advantage of HTTP/2?

Yes, I did. Let me explain the difference.

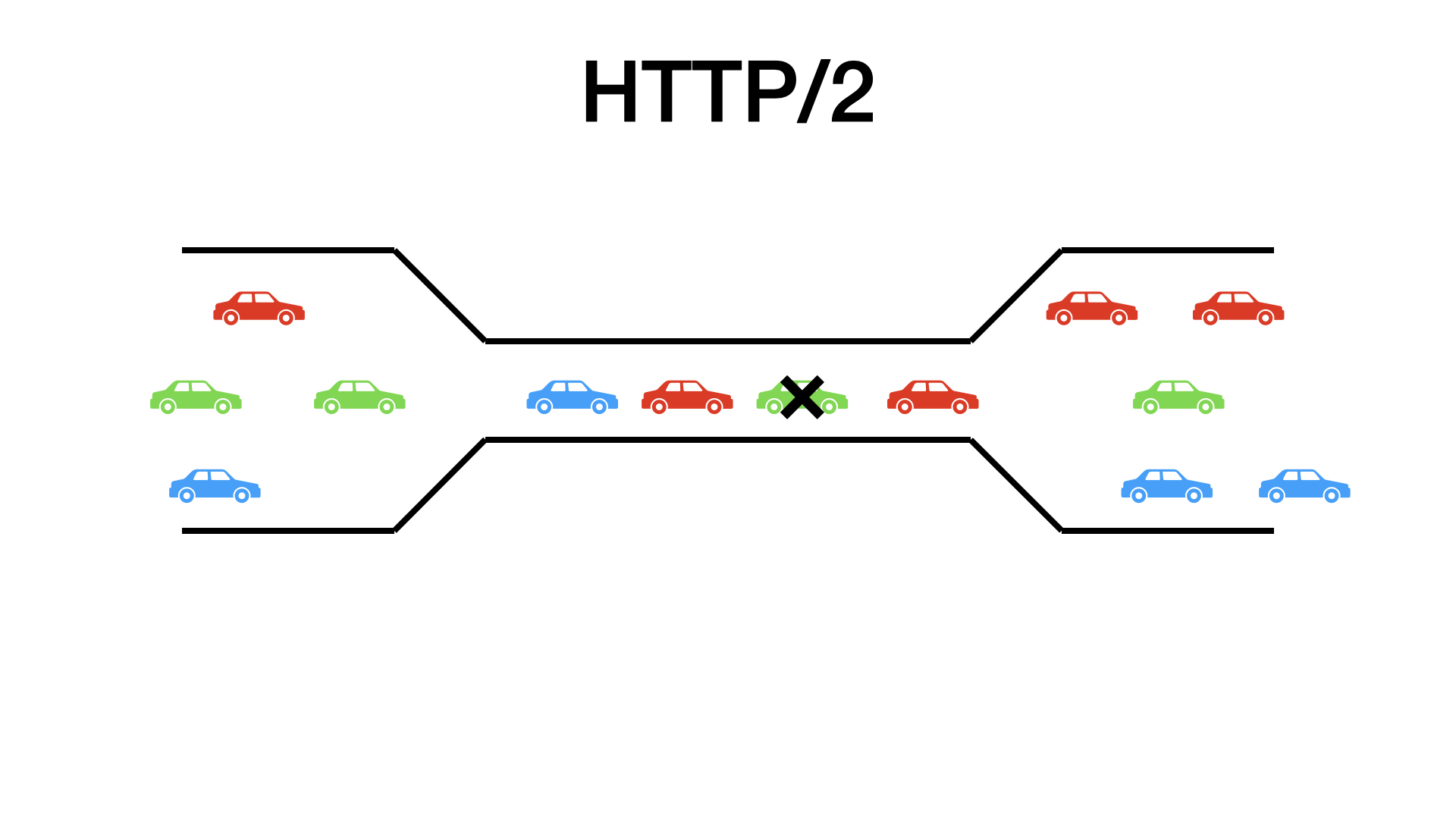

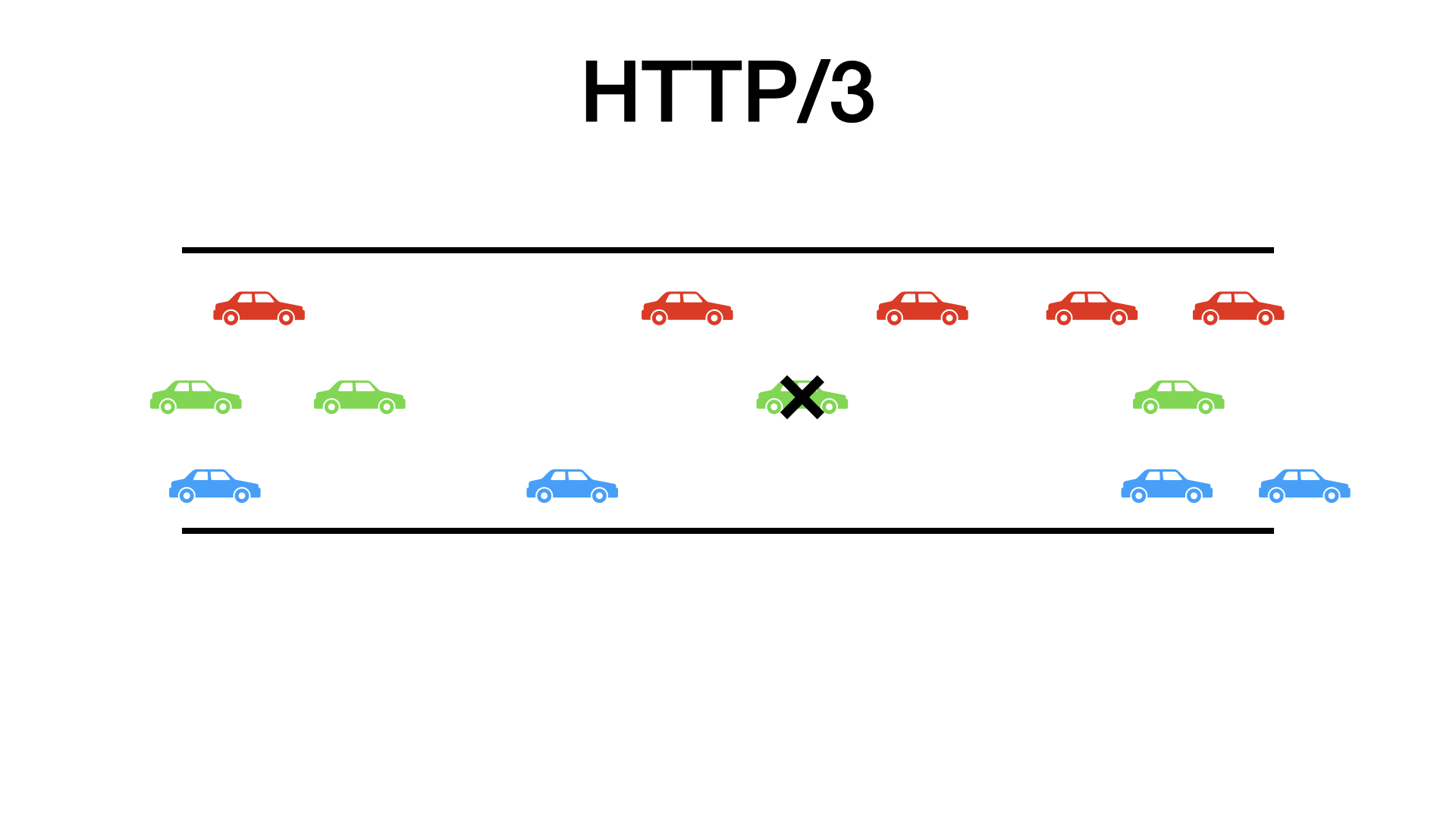

HTTP/2 is multiplexed, but the underlying TCP is not. When you are downloading multiple objects in parallel, HTTP/2 has to serialize the downloads into a single TCP stream. And that TCP stream is guaranteed to deliver data in order.

Now imagine what happens when one packet is lost.

Everything is blocked, everything has to wait for that one packet. One lost packet blocks everything.

Your OS can have an object in its buffer, but you cannot access it because TCP promised to deliver it after some lost packet.

This is called TCP head-of-line blocking.

With HTTP/2, we got rid of the HTTP head-of-line blocking, but we got the TCP head-of-line blocking instead.

QUIC supports independent streams. QUIC, unlike TCP, knows what belongs to what stream.

Thanks to that, when one packet is lost, only the streams that had data in that packet are blocked.

This can improve performance and user experience on lossy connections.
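The difference between TCP and QUIC head-of-line blocking can be shown with a toy model. It assumes that each packet carries data of one stream, that packet `i` arrives at time `i`, and that one packet arrives late because it had to be retransmitted. With a single ordered byte stream (TCP), nothing after the lost packet is readable until it arrives; with per-stream ordering (QUIC), only the stream that lost data waits. The timing model is purely illustrative.

```python
def delivery_times(packets, lost_index: int, retransmit_delay: int,
                   per_stream: bool) -> dict:
    """Return when each stream's data becomes readable by the application.

    packets: list of stream names, in sending order; packet i arrives at
    time i, except packet `lost_index`, which arrives retransmit_delay later.
    per_stream=False models TCP (one ordered byte stream for everything);
    per_stream=True models QUIC (ordering is enforced only within a stream).
    """
    arrival = [i + (retransmit_delay if i == lost_index else 0)
               for i in range(len(packets))]
    readable = {}
    for i, stream in enumerate(packets):
        # A packet is readable once it and every packet before it that it
        # must wait for have arrived.
        if per_stream:
            blockers = [j for j in range(i + 1) if packets[j] == stream]
        else:
            blockers = range(i + 1)
        ready = max(arrival[j] for j in blockers)
        readable[stream] = max(readable.get(stream, 0), ready)
    return readable
```

For packets `["css", "js", "img", "css", "js", "img"]` with the first packet delayed by 10 time units, the TCP model makes all three streams readable at time 10, while the QUIC model delays only `css` (time 10) and delivers `js` at time 4 and `img` at time 5.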

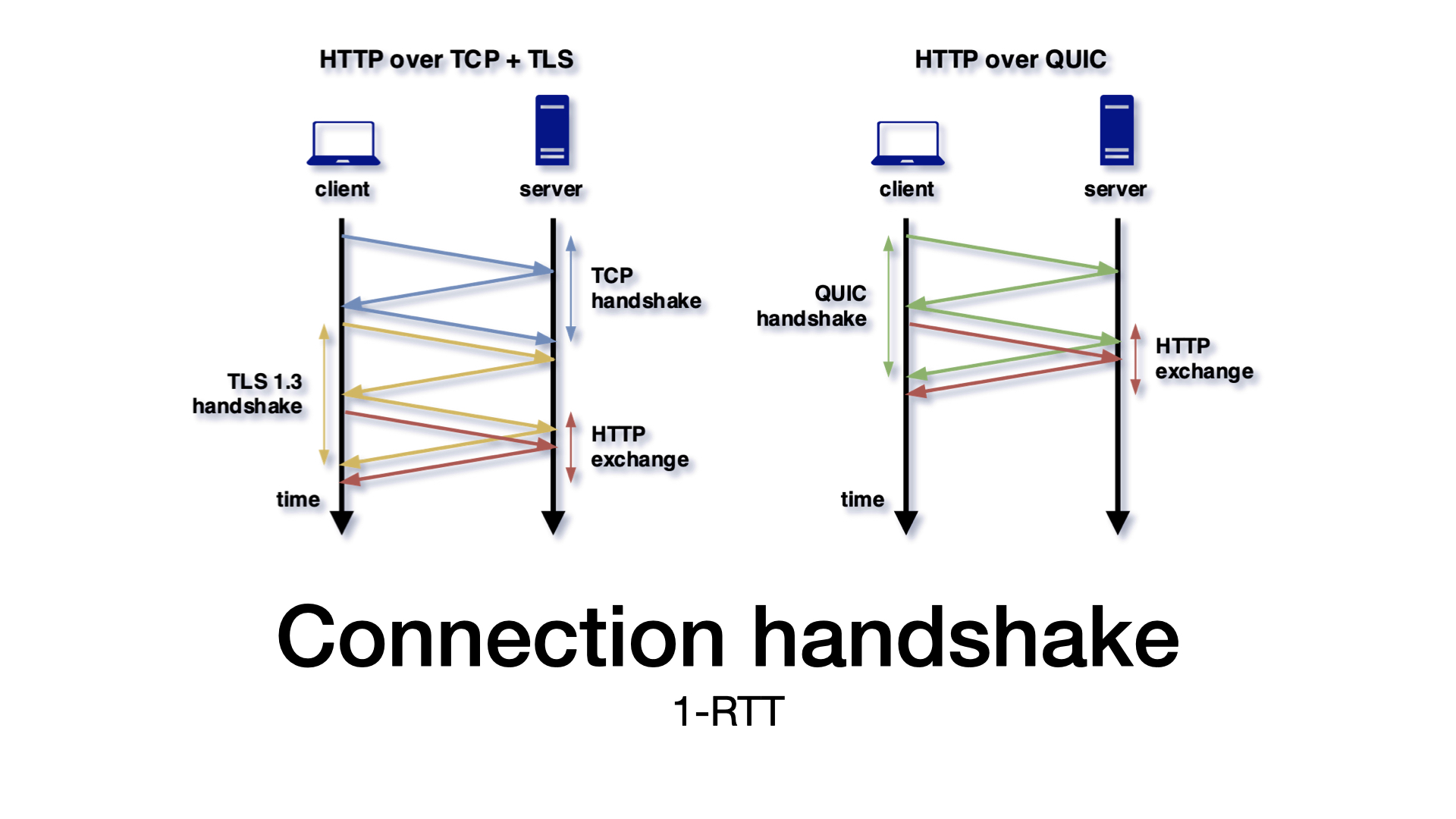

Another important QUIC advantage is that it offers a faster connection setup. I don’t want to go into much detail here.

The problem with TCP is that you have too many layers. You need one roundtrip to set up a TCP connection and at least one roundtrip for the TLS handshake, so useful data can be delivered in the third roundtrip at best.

With QUIC, you have one layer less. The QUIC handshake and the TLS handshake happen at the same time, shortening the connection setup time.

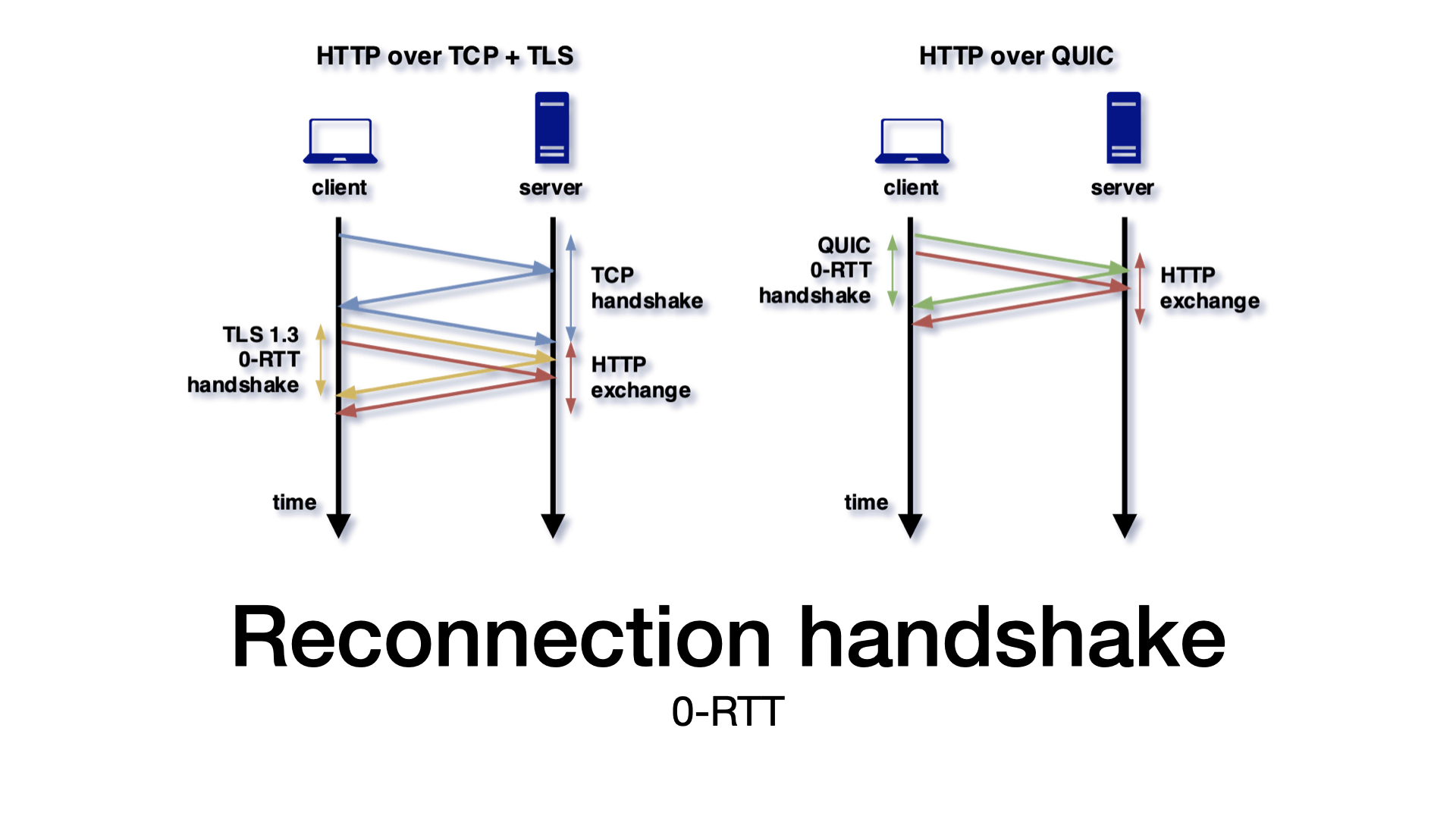

But we can do even better.

QUIC also supports so-called 0-RTT. With the 0-RTT handshake, we can include data with the handshake. There is no extra roundtrip that you have to wait for.

This is possible when a client can reuse a secret from before, which means that 0-RTT is available for reconnections.
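The round-trip arithmetic can be summarized in a few lines. This is a simplified sketch that counts only round trips (no processing time, no loss) and adds one round trip for the HTTP request and response. The TLS 1.3 case matches the "third roundtrip at best" above; the TLS 1.2 row is added for comparison, since TLS 1.2 needs two round trips for a full handshake.

```python
def time_to_first_byte(rtt_ms: float, setup: str) -> float:
    """Rough time until the first response byte arrives, in milliseconds.

    Counts only network round trips: the handshake round trips of the
    given setup, plus one round trip for the HTTP request and response.
    """
    handshake_rtts = {
        "tcp+tls1.2": 1 + 2,  # TCP handshake, then a full TLS 1.2 handshake
        "tcp+tls1.3": 1 + 1,  # TCP handshake, then a TLS 1.3 handshake
        "quic": 1,            # transport and TLS handshakes combined
        "quic-0rtt": 0,       # request sent along with the first flight
    }
    return (handshake_rtts[setup] + 1) * rtt_ms
```

On a 100 ms path, this gives 300 ms for TCP with TLS 1.3, 200 ms for a fresh QUIC connection, and 100 ms for a QUIC reconnection with 0-RTT.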

There is one danger with the 0-RTT handshake. The 0-RTT handshake makes replay attacks possible. It should be enabled for idempotent requests only.

To be fair to TCP, I have to mention that there is a TCP extension called TCP Fast Open that should make 0-RTT connections possible. But I will explain in a minute why it does not work in practice.

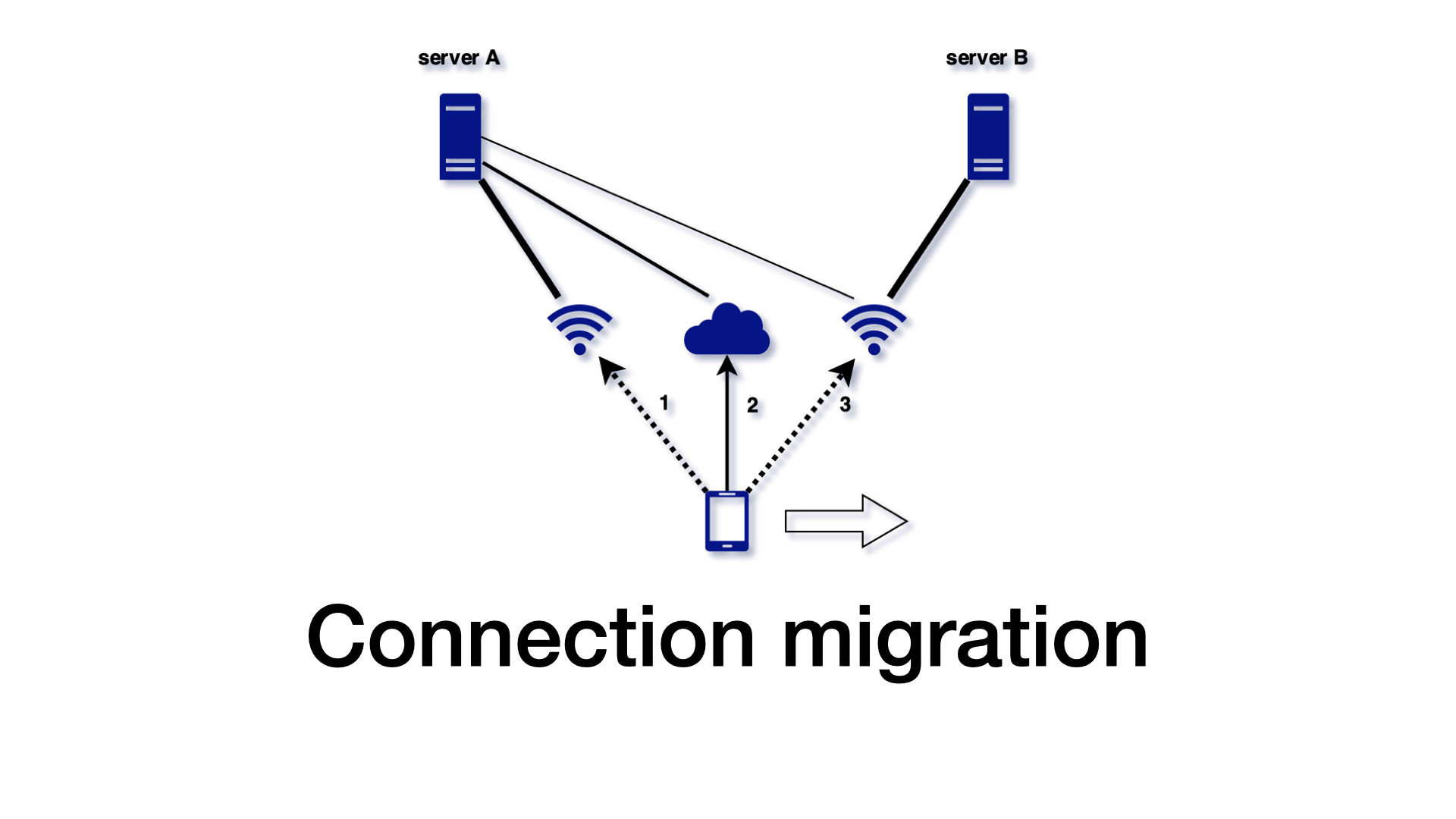

QUIC supports connection migration.

Unlike TCP, QUIC does not identify a connection using an IP address and a port number, but it generates a unique connection ID instead.

Thanks to that, it is possible to switch between (for example) a cellular network and Wi-Fi without aborting connections or requests.
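The essence of connection migration is which key a server uses to find the connection for an incoming datagram. Here is a hypothetical sketch (the class and field names are made up for illustration). A TCP-style server keys connections by client IP address and port, so a new address means a new connection; keyed by connection ID, the same connection simply continues on the new path. A real QUIC implementation would also validate the new path before sending much data to it, which this sketch skips.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Connection:
    connection_id: bytes
    peer: tuple[str, int]  # last address the client was seen at
    received: list[bytes] = field(default_factory=list)


class Demultiplexer:
    """Hypothetical server-side lookup, keyed by connection ID, not address."""

    def __init__(self) -> None:
        self.by_id: dict[bytes, Connection] = {}

    def on_datagram(self, connection_id: bytes, peer: tuple[str, int],
                    payload: bytes) -> Connection:
        conn = self.by_id.get(connection_id)
        if conn is None:
            conn = self.by_id[connection_id] = Connection(connection_id, peer)
        elif conn.peer != peer:
            # Same connection ID from a new IP/port: the client migrated
            # (e.g. Wi-Fi to cellular); keep the connection, update the path.
            conn.peer = peer
        conn.received.append(payload)
        return conn
```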

I already mentioned that QUIC is always encrypted. What is important here is that QUIC encrypts not only your message, but also the metadata used for traffic control. With TLS over TCP, your message is encrypted, but TCP headers stay in plaintext.

The encryption is important for your privacy, obviously, but not only for that. It is also important for future development of QUIC.

One problem with the internet is that many middleboxes are trying to help you. Various devices think that they understand the protocols that you are using and interfere with your traffic. They claim that it is in your interest.

But as these boxes get old, their understanding becomes outdated, and many middleboxes are likely to cause more harm than good.

For example, old boxes can assume that TCP port 80 carries HTTP/1 and break any HTTP/2 traffic. That’s the reason why we use HTTP/2 only over TLS: when something is encrypted, the middleboxes cannot see it.

TLS can hide HTTP from the middleboxes, but the problem remains because the boxes still see the TCP layer. I mentioned TCP Fast Open, an extension of TCP that should allow a faster connection setup, something like 0-RTT in QUIC. TCP Fast Open was never widely adopted because middleboxes can consider this extension invalid and drop traffic using it.

By encrypting everything in QUIC, including traffic control, including headers, we refuse any help from the middleboxes. Thanks to that, the QUIC protocol can evolve.

We say that QUIC avoids ossification.

There are other advantages.

QUIC offers faster development. Because QUIC code is in user-space, not in OS kernel, new versions can be deployed with any software upgrade. When you get a new browser version, you can get a new QUIC version.

QUIC also offers better options for congestion control and loss recovery. This is a very interesting problem and our protocol optimization team spends a lot of time on that. The idea is that for the best performance, you want to send data fast enough to utilize available bandwidth but not too fast to cause packet loss.
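A classic, very simplified congestion control rule is additive increase, multiplicative decrease (AIMD), as used by TCP Reno: grow the sending window a little after every successful round trip and cut it in half on loss. The sketch below only illustrates the trade-off between using the bandwidth and causing loss. It is not presented as the algorithm used by Akamai or by any particular QUIC stack; modern implementations often use other algorithms, such as CUBIC or BBR.

```python
from __future__ import annotations


def aimd(events: list[str], initial: float = 10.0, increase: float = 1.0,
         decrease: float = 0.5, minimum: float = 1.0) -> list[float]:
    """Additive-increase / multiplicative-decrease congestion window.

    For every round trip: 'ack' means all data was delivered, so probe for
    more bandwidth by growing the window linearly; 'loss' means we sent
    too fast, so shrink the window multiplicatively.
    Returns the window size (in packets) after each round trip.
    """
    window = initial
    history = []
    for event in events:
        if event == "ack":
            window += increase
        elif event == "loss":
            window = max(minimum, window * decrease)
        else:
            raise ValueError(f"unknown event: {event!r}")
        history.append(window)
    return history
```

The resulting sawtooth (slow growth, sharp drop) is the basic shape that more sophisticated algorithms try to improve on.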

No technology comes with advantages only, so there are challenges for QUIC too.

From my point of view, the main problem with QUIC is that it is new. For example, internet providers may not expect regular traffic over UDP. They can rate limit it, or even block it.

QUIC is not implemented by operating systems, so apps have to provide their own implementation. The quality of such implementations can be questionable compared to the TCP stack, which has been optimized since the '80s.

Probably the most discussed challenge is the CPU usage. That’s an area where development and optimizations can help.

HTTP/3 support

Let’s move from theory to practice.

What can you try today? What’s the current state of HTTP/3?

I hope that you are curious, but before I get to it, I have to clarify an important distinction. The distinction between two QUIC implementations.

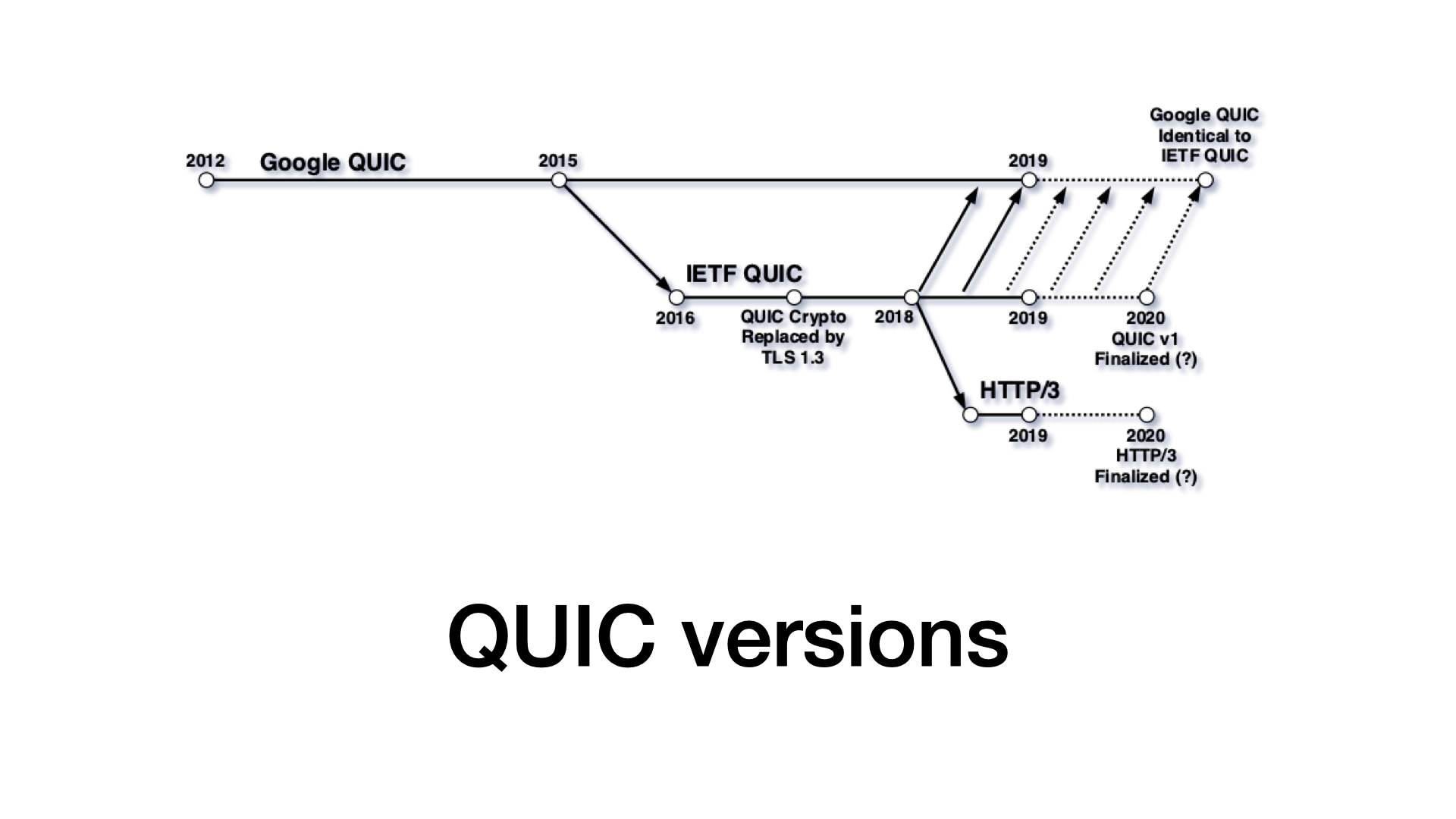

We have Google QUIC and IETF QUIC.

- Google QUIC (or gQUIC) is open-source, available in the Chromium codebase. It is not standardized, and it is evolving with Chrome versions.

- IETF QUIC (or QUIC with no prefix) is the upcoming standard. An IETF working group is finalizing it, so we will hopefully have an official version soon.

These two versions are not compatible, and support for the two implementations differs. So, how are these two versions supported?

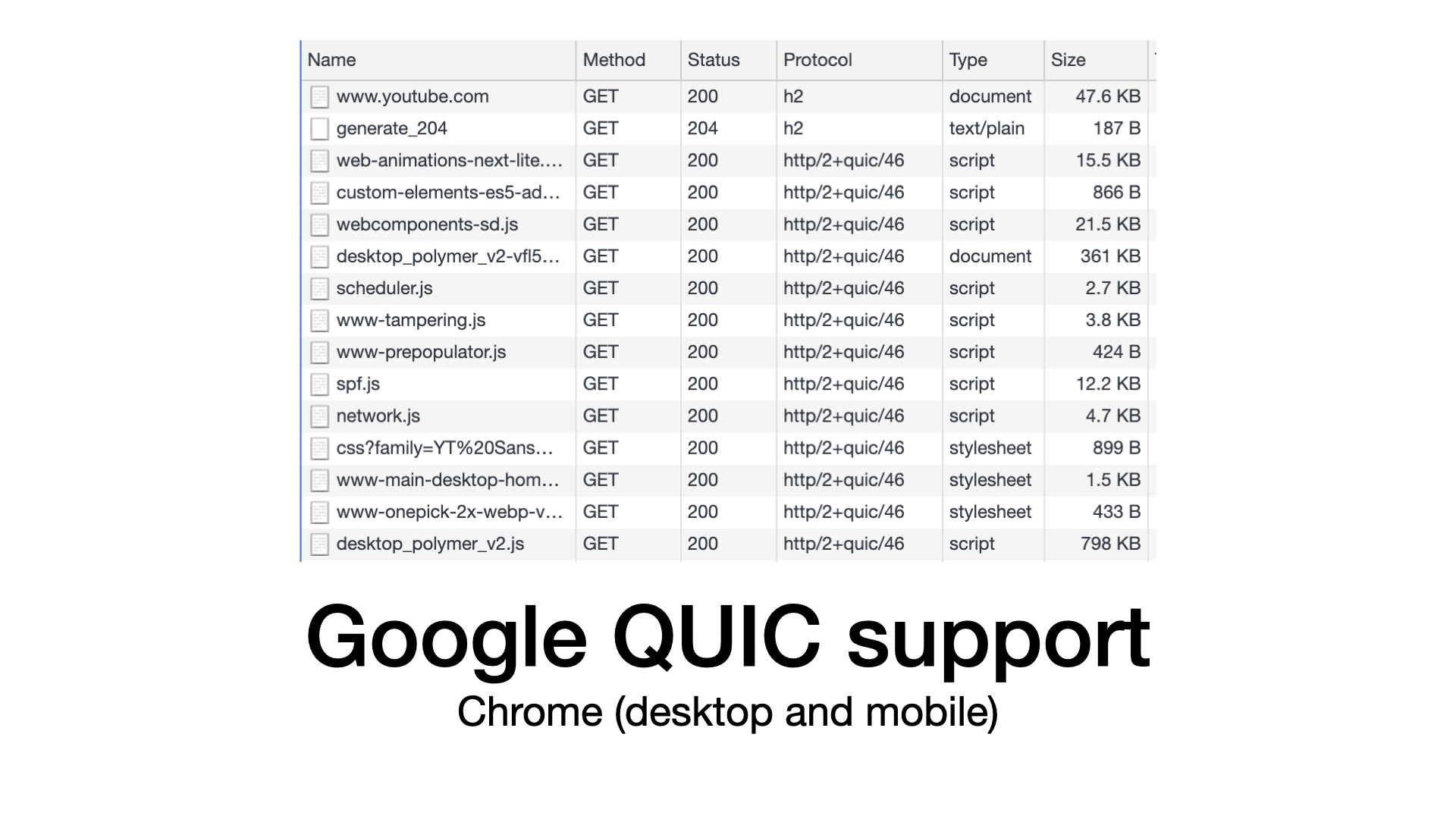

Let’s start with gQUIC. It is quite likely that you are using Google QUIC already today.

When a Chrome browser connects to a Google server, it will use QUIC in most cases. Chrome is a quite common client and Google services are also popular, so a significant portion of internet traffic uses QUIC already.

At Akamai, we enabled Google QUIC for all media customers. That’s a lot of traffic too.

But besides that, I am not aware of any other large-scale deployment of Google QUIC. I heard rumors about closed deployments, even large-scale ones, but I have not noticed anything publicly available besides Google and Akamai.

But strictly speaking, Google QUIC is not HTTP/3.

And this talk is about HTTP/3. HTTP/3 is HTTP over IETF QUIC. We can expect support for IETF QUIC and HTTP/3 in all major browsers.

Firefox Nightly and Chrome Canary have supported IETF QUIC for some time already, and Apple announced HTTP/3 support recently.

Speaking about clients, special mention belongs to cURL, which has experimental support for HTTP/3.

cURL is not only a command-line tool but also a C library, libcurl. You can find this library in almost everything. If your car speaks HTTP/3 in the future, it will most likely be thanks to libcurl.

If you want to learn more about HTTP/3, I recommend posts and talks from Daniel Stenberg, the author of cURL.

On the server side, you can notice that CDNs (content delivery networks) do not want to miss this new technology. CDNs are investing in QUIC and HTTP/3. If you are using their services, you may get HTTP/3 without any extra effort.

From my quick research, it seems that some CDNs offer HTTP/3 today. From my point of view, this support must be experimental at best, because you cannot optimize a protocol that has no production traffic. And HTTP/3 traffic has to be close to zero because browsers don’t support it yet.

But you can try it in specific scenarios or if you want to prepare for the future.

If you prefer a DIY approach, you may appreciate that HTTP/3 support for Nginx is in progress. The code is developed in a separate "quic" branch, so I would not consider it stable, but they are likely to get there.

Just be aware that enabling HTTP/3 is only the first step. I know something about that because our team has been looking for the best QUIC configuration for the last few years. We have deployed a lot of improvements, but we are still not done.

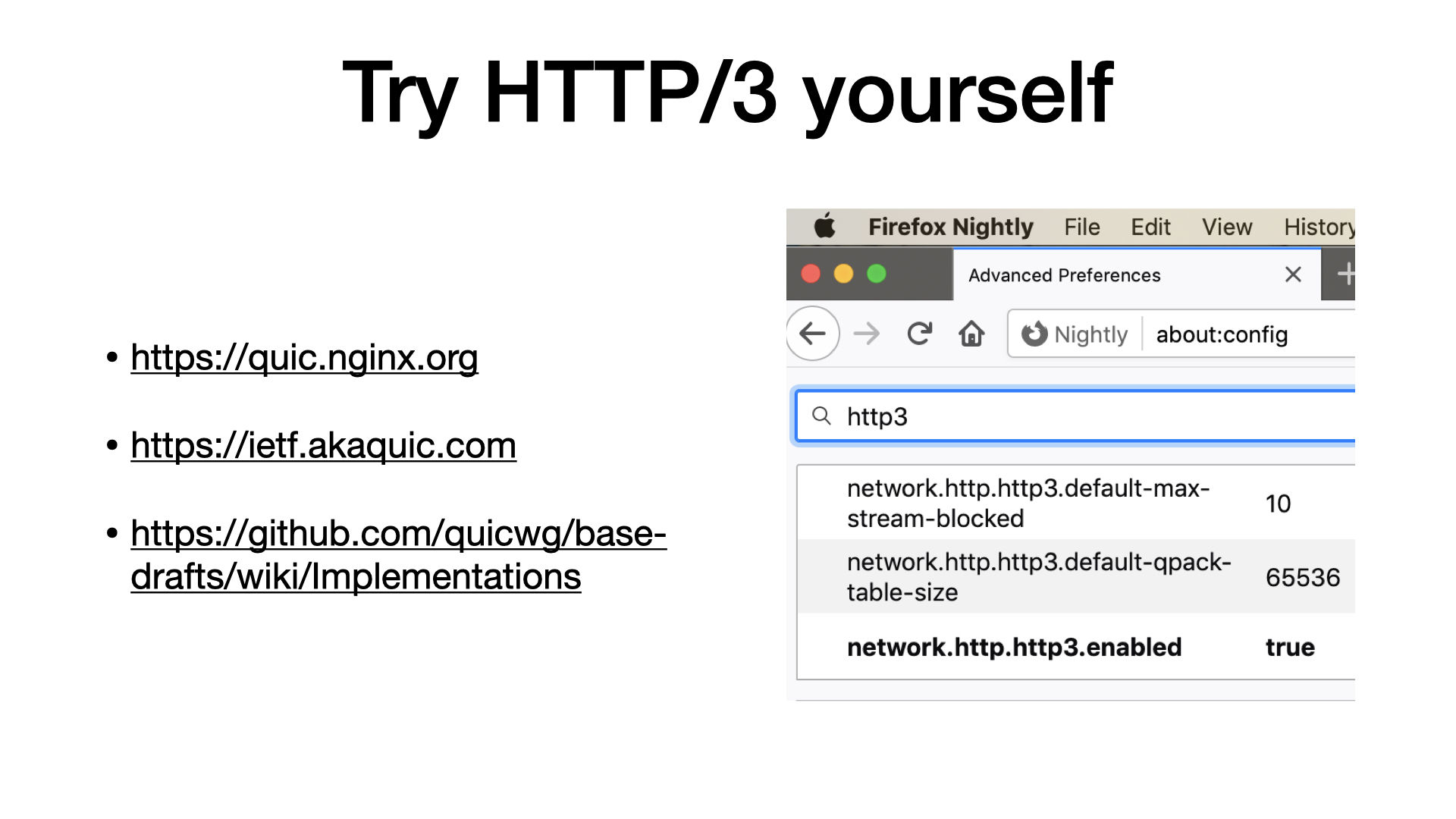

To see a list of all HTTP/3 implementations, visit the GitHub account of the IETF QUIC working group.

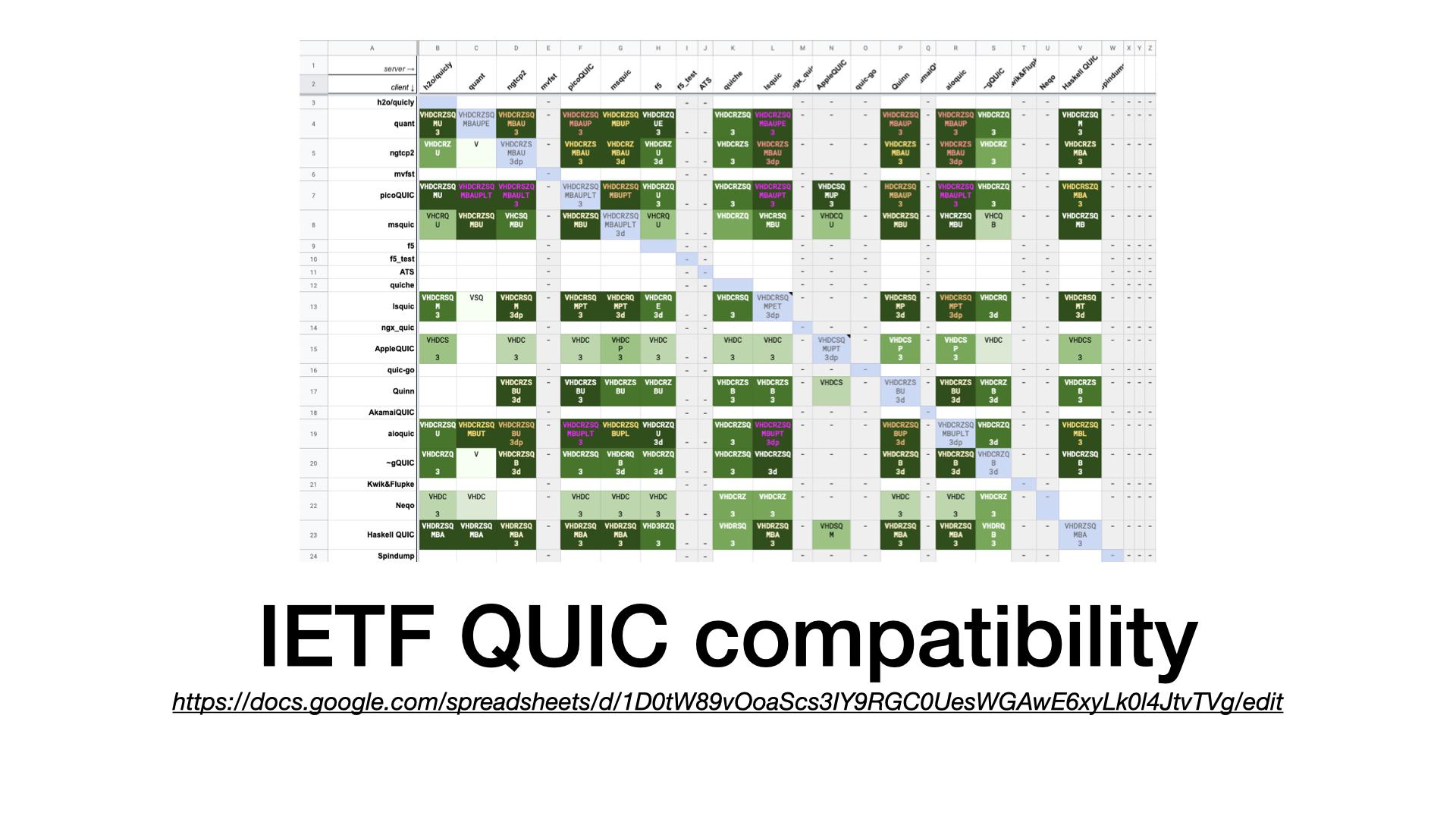

There is also a compatibility table documenting what clients work with what servers. The table is pretty green these days, which is very promising.

If you want to try HTTP/3 yourself, download Firefox Nightly, enable HTTP/3 in its configuration (on the about:config page), and visit one of the test pages.

Nginx provides a nice test page. Akamai offers one. Others can be found on the wiki of the IETF working group.

What versions to choose? Google QUIC or IETF QUIC?

You know the answer. If you want to develop anything today, go for the standard IETF version. Its support is only experimental today, but this should get much better soon.

Akamai has supported QUIC since 2016. We deployed the proprietary Google version and we update it regularly. Back then, this was the only way to get QUIC traffic and to offer all the advantages to our customers. And it is still the only way today.

But in practice, Google is back-porting the IETF standard to their proprietary implementation. Google gQUIC is approaching IETF QUIC compatibility with every new version, so it’s likely that both versions will converge in the future.

This is similar to how HTTP/2 was born. There was a proprietary protocol from Google, called SPDY, that became standardized as HTTP/2.

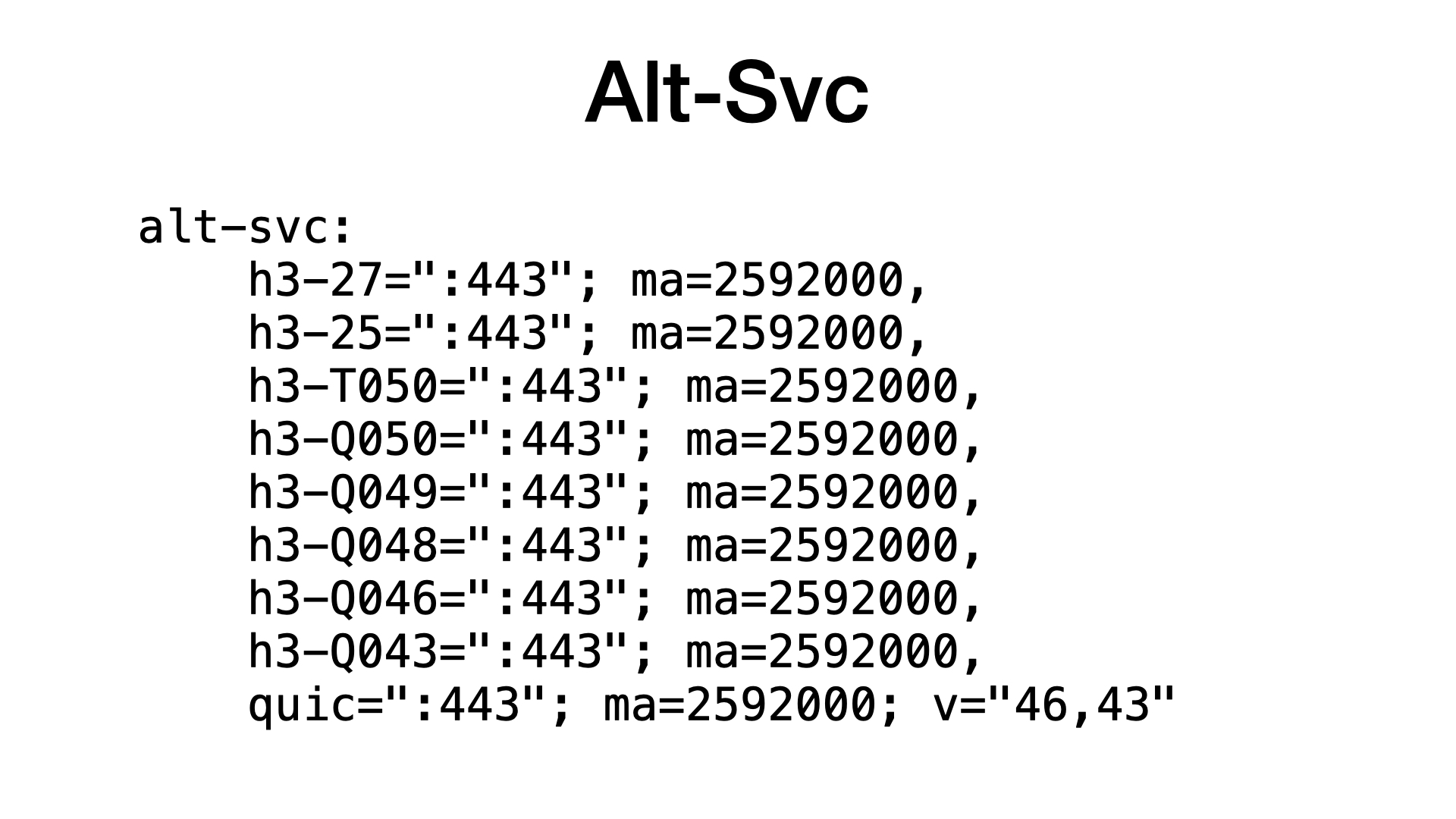

If you want to check what HTTP/3 or QUIC versions are supported by some server, look for an Alt-Svc header.

The Alt-Svc header is sent by servers to inform clients that they can switch to HTTP/3.

This is different from switching from HTTP/1 to HTTP/2. Both HTTP/1 and HTTP/2 run over TCP, so you can open a TCP connection and then negotiate which HTTP version to use. With HTTP/3, a browser has to open a separate QUIC connection.
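Here is a minimal sketch of reading an Alt-Svc header value in Python, following the syntax from RFC 7838: comma-separated alternatives, each a protocol ID with a quoted authority, followed by optional parameters such as `ma` (max age in seconds, 24 hours by default). The header values in the examples are illustrative. For brevity, the parser ignores escape sequences inside quoted strings and percent-encoded protocol IDs.

```python
from __future__ import annotations


def split_outside_quotes(text: str, sep: str) -> list[str]:
    """Split on `sep`, ignoring separators inside double-quoted strings."""
    parts, current, quoted = [], [], False
    for char in text:
        if char == '"':
            quoted = not quoted
        if char == sep and not quoted:
            parts.append("".join(current))
            current = []
        else:
            current.append(char)
    parts.append("".join(current))
    return [part.strip() for part in parts if part.strip()]


def parse_alt_svc(value: str) -> list[dict]:
    """Parse an Alt-Svc header value (RFC 7838) into a list of alternatives."""
    if value.strip() == "clear":
        return []  # the server asks clients to forget all alternatives
    alternatives = []
    for entry in split_outside_quotes(value, ","):
        alternative, *params = split_outside_quotes(entry, ";")
        protocol, _, authority = alternative.partition("=")
        item = {"protocol": protocol.strip(),
                "authority": authority.strip().strip('"'),
                "max_age": 86400}  # RFC 7838 default: 24 hours
        for param in params:
            name, _, param_value = param.partition("=")
            if name.strip() == "ma":
                item["max_age"] = int(param_value.strip().strip('"'))
        alternatives.append(item)
    return alternatives
```

For example, `parse_alt_svc('h3-29=":443"; ma=3600')` returns a single alternative for protocol `h3-29` on port 443 of the same host, valid for one hour.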

BTW the Alt-Svc header can be quite useful for my job, for the protocol optimization. All Akamai servers support QUIC, but we send the Alt-Svc header only when we believe that a client will benefit from it.

HTTP/3 and Python

Python. This is a Python conference, isn’t it?

If you want to try HTTP/3 in Python, go for the aioquic library. It is the only Python library mentioned at the IETF QUIC working group wiki.

I tried it and it works nicely.

Yes, I tried it, that’s all. I write Python a lot, but my code does not use HTTP/3 yet.

Why? Let’s ignore the fact that Google QUIC is available in Chromium only, and deployments of IETF QUIC are still experimental.

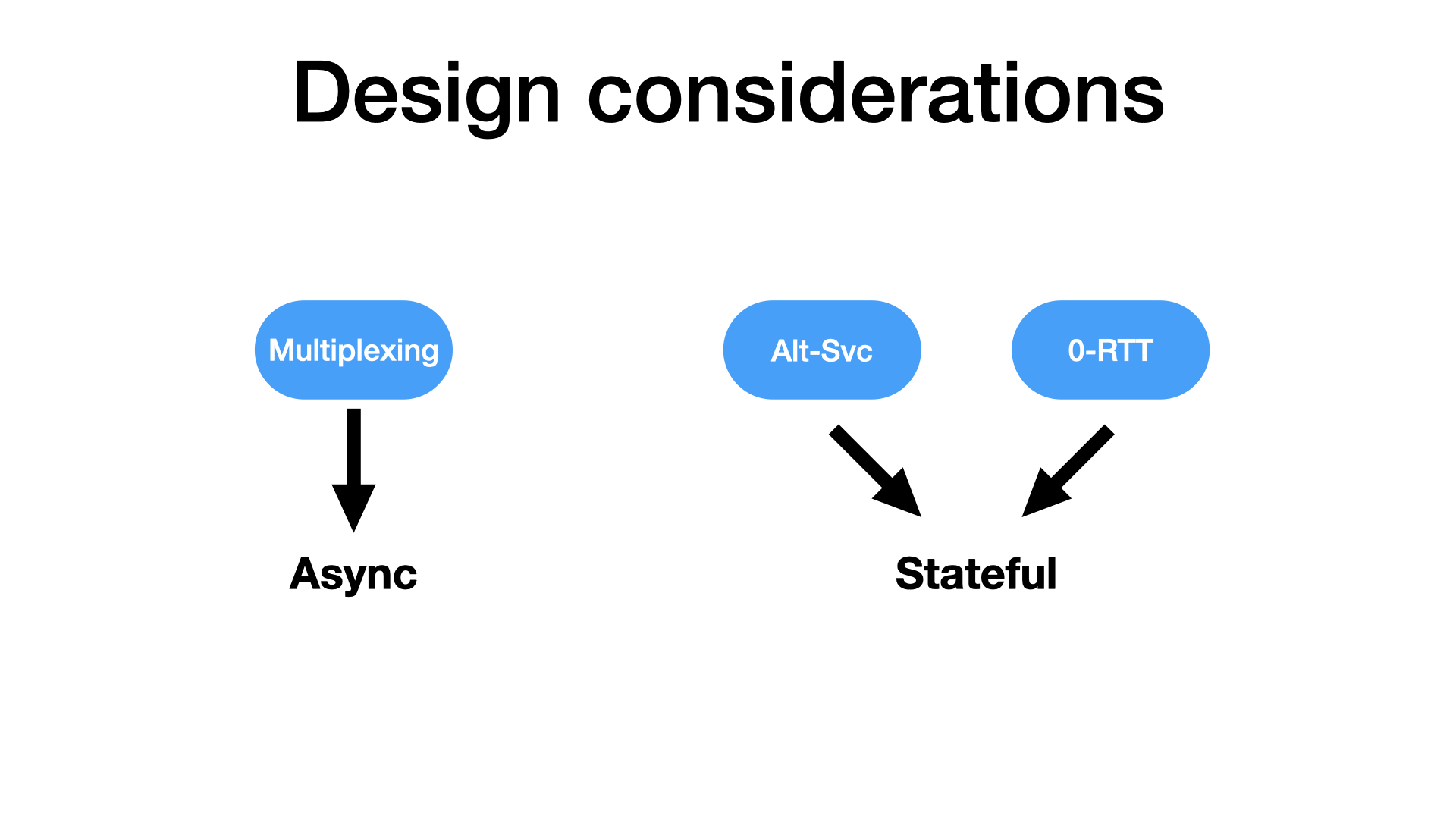

Let’s discuss how I can use HTTP/3 once it is standardized and supported. Because I am afraid that to take advantage of HTTP/3, I have to change how I write my code.


I told you that the main advantage of QUIC is multiplexing. To use that, I have to issue concurrent requests. Which leads me to async programming.
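Why multiplexing pushes us towards async code can be seen in a small asyncio sketch. The `fake_request` coroutine is a hypothetical stand-in for a real HTTP request that only sleeps for an assumed latency. What matters is that all requests are started at once with `asyncio.gather`, so the total time is close to one latency instead of the sum of all of them.

```python
import asyncio
import time


async def fake_request(url: str, latency: float) -> str:
    """Hypothetical stand-in for an HTTP request: just waits for `latency`."""
    await asyncio.sleep(latency)
    return f"response from {url}"


async def fetch_all(urls, latency: float = 0.1):
    # All requests are in flight at the same time, which is what lets a
    # multiplexed HTTP/2 or HTTP/3 connection carry them in parallel.
    return await asyncio.gather(*(fake_request(url, latency) for url in urls))


def timed_run(urls, latency: float = 0.1):
    start = time.perf_counter()
    results = asyncio.run(fetch_all(urls, latency))
    return results, time.perf_counter() - start
```

With 20 requests and 100 ms of latency, the run takes roughly 0.1 s instead of 2 s. In real code, `fake_request` would be replaced by a call on one shared client, for example an `httpx.AsyncClient(http2=True)` from HTTPX, which needs the optional `h2` dependency.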

Another issue is the Alt-Svc header. Most servers will not support HTTP/3 even after it is standardized. So, our clients will have to remember what servers sent the Alt-Svc header to know where HTTP/3 is available. The client will need some storage.

And some storage is necessary for the 0-RTT support too. To take advantage of 0-RTT, a client has to remember secrets between sessions.
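What such client-side storage has to hold can be sketched as a small class. Everything here is hypothetical (the class, its methods, and the keys are made up for illustration): Alt-Svc hints are remembered per origin until their max age expires, and opaque session tickets are kept so that a later connection could attempt 0-RTT. This version lives in memory only; a real client would persist it between sessions and would also respect the lifetime of the tickets.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field


@dataclass
class ClientMemory:
    """Hypothetical per-client storage for HTTP/3 hints and 0-RTT secrets.

    alt_svc maps an origin to the time when its HTTP/3 hint expires;
    tickets maps an origin to an opaque TLS session ticket.
    """
    alt_svc: dict[str, float] = field(default_factory=dict)
    tickets: dict[str, bytes] = field(default_factory=dict)

    def remember_alt_svc(self, origin: str, max_age: int,
                         now: float | None = None) -> None:
        now = time.time() if now is None else now
        self.alt_svc[origin] = now + max_age

    def supports_h3(self, origin: str, now: float | None = None) -> bool:
        now = time.time() if now is None else now
        expires = self.alt_svc.get(origin)
        if expires is None or expires <= now:
            self.alt_svc.pop(origin, None)  # forget stale hints
            return False
        return True

    def remember_ticket(self, origin: str, ticket: bytes) -> None:
        self.tickets[origin] = ticket

    def can_try_0rtt(self, origin: str) -> bool:
        # 0-RTT needs an HTTP/3 hint and a ticket from an earlier session.
        return self.supports_h3(origin) and origin in self.tickets
```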

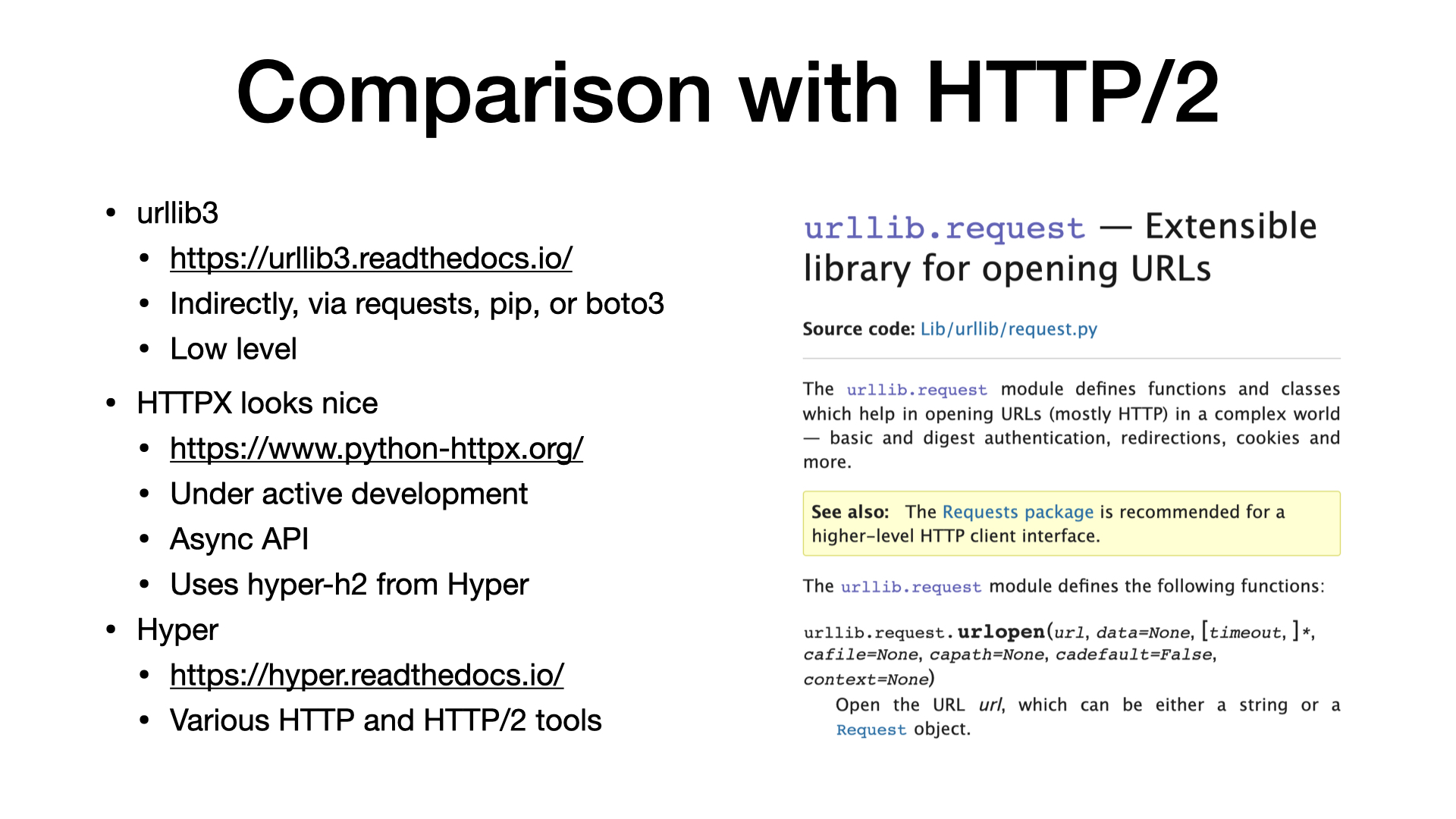

Before speaking about migration to HTTP/3, we should talk about HTTP/2 first. Because I guess that most of you do not even use HTTP/2 in Python yet.

The most common Python library for issuing HTTP requests is urllib3. You are likely to use it, even if you are not aware of it. It is a dependency of popular libraries like requests or pip. And urllib3 unfortunately does not support HTTP/2.

If I had to choose a library for HTTP/2, I would use HTTPX. It has a nice API similar to requests, but unlike requests, it supports HTTP/2 and offers async invocation.

For HTTP/2 support, the HTTPX library uses the h2 package from the Hyper project, which you will probably find when you google for Python and HTTP/2.

To use HTTP/3 from Python, I would like to have a library similar to HTTPX. A library with an asynchronous requests-like API.

I need a library that chooses an appropriate HTTP version for me. When I write Python code, I do not want to care about HTTP versions.

Until we have a library that abstracts HTTP versions, most of us will probably still use HTTP/1 from the ‘90s.

Conclusion

Let’s summarize what I have presented to you.

We have a new transport protocol called QUIC. It is a TCP replacement built on top of UDP.

HTTP/3 is similar to HTTP/2 but on top of QUIC instead of TCP.

For practical usage, I want to be able to issue HTTP requests without caring what version will be used. My client should take care of that. Similar to a browser, which does not ask me what HTTP version to use.

I like the view that there is only one HTTP, and the HTTP versions are just different mappings from that one HTTP to the underlying transport layer.

HTTP/1 and HTTP/2 map the HTTP concept to TCP, and HTTP/3 maps this concept to QUIC, on top of UDP.

Should you care about HTTP/3?

I would say that you should be aware that something important is changing. All the big players are involved in that.

The change is quite low level, so it may not affect you directly. But if you are programming for the web then you should at least keep an eye on it.

If you want to learn more, I already recommended talks and articles from Daniel Stenberg.

For more details or for a more critical view, look for a talk from Robin Marx.

And I should not forget about the IETF working group.

Thank you for your attention.

Links and resources

Further reading

- Daniel Stenberg: HTTP/3 for everyone. (Similar talk at Full Stack Fest or GOTO 2019)

- Robin Marx: QUIC and HTTP/3: Too big to fail?!

- Robin Marx: HTTP/3 and QUIC: the details

- IETF Draft: Hypertext Transfer Protocol Version 3 (HTTP/3)

- Chromium wiki: QUIC, a multiplexed stream transport over UDP

- Original QUIC draft from 2012: QUIC: Design Document and Specification Rationale

Implementations

- Known Implementations at IETF QUIC WG wiki.

- IETF QUIC Interop Matrix

- QUIC support for nginx (demo site)

- Akamai HTTP/3 test page